With the need for high-quality imaging on the rise in many embedded vision applications such as smart surveillance, digital microscopes, document scanners, industrial handhelds, etc., camera manufacturers today are coming up with high-resolution cameras that can capture even the minute details of a scene or an object. For instance, e-con Systems has a wide portfolio of 4K cameras including See3CAM_160 (16MP autofocus USB camera), See3CAM_CU81 (4K HDR USB camera), and many more.

But at higher resolutions and frame rates, one of the common problems faced with any USB video device is frame corruption. Frame corruption can occur when the host device doesn’t have enough bandwidth to handle the incoming video. However, it can also happen when the stream fits within USB’s maximum bandwidth, owing to the processing overhead on the host device. This is true irrespective of whether the video is in a compressed or an uncompressed format.

In a compressed format like MJPEG, the compression algorithm is used to reduce the frame size significantly to achieve higher framerates in embedded platforms or low-performance host devices. The host needs to decode these incoming MJPEG frames to further process or render the output at a faster rate.

Decoding image data in the MJPEG format is a computational overhead, and some hosts do not have the capacity to decode every incoming frame when the frame size is large, which in turn results in frame drop or frame corruption on the host side. This is a major challenge with decoders based on VA-API, which use the GPU to decode high-resolution frames. In a VA-API encode/decode application, as more and more high-resolution frames come in, the buffer queue in the UVC driver eventually overflows, which leads to frame corruption. With an uncompressed format like Y8, frame corruption occurs when we stream high-resolution images or video (say 9MP) at a high frame rate such as 30FPS.
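To put the uncompressed case into numbers, here is a rough back-of-the-envelope sketch. It assumes that Y8 uses one byte per pixel and treats 9MP as exactly 9,000,000 pixels. The function name is ours, chosen only for illustration, and the result ignores USB protocol overhead.

```python
def raw_data_rate(megapixels: float, bytes_per_pixel: float, fps: float) -> float:
    """Return the uncompressed stream rate in bytes per second."""
    return megapixels * 1_000_000 * bytes_per_pixel * fps


# Y8 stores one 8-bit luma sample per pixel, i.e. 1 byte per pixel.
y8_rate = raw_data_rate(megapixels=9, bytes_per_pixel=1, fps=30)

# Report the rate in megabytes per second and gigabits per second.
print(f"{y8_rate / 1e6:.0f} MB/s, {y8_rate * 8 / 1e9:.2f} Gbit/s")
```

A stream of this size has to be moved and processed by the host continuously, which is why even a short stall on the host side can be enough to lose buffers.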

In this article, we will take a detailed look at how frame corruption occurs in Linux, and how to fix it by optimizing the UVC host driver and modifying values in the kernel source code.

Understanding how frame corruption occurs

Before going into the solution, let us first understand how frame corruption occurs. To do this, we need to learn how the host receives and decodes the incoming frames from the device. The process works as follows:

- Once the camera has a complete frame captured and ready, it commits the buffer to the host.

- If the host has an empty buffer, it accepts the committed frame buffer from the device.

- Otherwise, the host returns an error. When this happens, the device drops the current buffer, waits till the buffer is available from the host, and then commits the new frame buffer successfully.

- Due to computational limitations, the host might not always have an empty buffer to accept the buffer from the camera. This is what causes frame drop or frame corruption.

- Since a frame is sent through multiple buffers, a single buffer drop results in frame corruption in the host. Frame corruption can occur in two ways:

  - Before the UVC layer, when there aren’t enough buffers to accommodate the entire frame from the device. The UVC layer handles this type of corruption by dropping the entire frame.

  - After the UVC layer, due to the processing overhead of the decoder used. This also results in frame corruption, which is observed in the host-side application.

- To avoid these two types of corruption, the host must have empty buffers to accommodate all the frames from the device. We can achieve this by increasing the queue size on the driver side so that there are enough frame buffers that can store the incoming frame.
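The steps above can be illustrated with a small toy simulation. This is only a sketch under made-up assumptions, not a model of the actual UVC driver: the device commits one buffer per tick, the host is periodically busy and drains nothing, and every name and number here (`simulate`, the stall pattern, the drain rate) is hypothetical.

```python
from collections import deque


def simulate(queue_size, frames=10, buffers_per_frame=8,
             period=10, busy_ticks=6, drain_per_tick=3):
    """Return the set of frame indices that lost at least one buffer."""
    queue = deque()
    corrupted = set()
    tick = 0
    for frame in range(frames):
        for part in range(buffers_per_frame):
            # Host side: busy (e.g. decoding) for the first busy_ticks ticks
            # of every period; otherwise it frees up to drain_per_tick slots.
            host_busy = (tick % period) < busy_ticks
            if not host_busy:
                for _ in range(drain_per_tick):
                    if queue:
                        queue.popleft()
            # Device side: commit one buffer per tick. If the host has no
            # empty slot, the buffer is dropped and the frame is incomplete.
            if len(queue) < queue_size:
                queue.append((frame, part))
            else:
                corrupted.add(frame)
            tick += 1
    return corrupted


small = simulate(queue_size=5)
large = simulate(queue_size=8)
print(f"queue of 5: {len(small)} corrupted frames")
print(f"queue of 8: {len(large)} corrupted frames")
```

With these illustrative numbers, the smaller queue fills up during each busy period and loses buffers, while the larger queue absorbs the same stalls without dropping anything. The host's average throughput is identical in both runs; only the queue depth differs.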

Fixing frame corruption in Linux

In the previous section, we learnt how frame drop or frame corruption occurs. Let us now look at how this challenge in Linux hosts can be addressed in detail.

Optimizing UVC host driver in Linux

Frame corruption can be handled efficiently by modifying the buffer parameters of the UVC host driver. The problem can be significantly reduced by increasing the number of URBs (USB Request Blocks) used and by increasing the packet size of each URB on the UVC driver side.

A URB is what helps the Linux kernel communicate with all the USB devices. It is nothing but a structure which is used to pass information between the host kernel and the connected device. All data and control requests are stored in the URB queue.

When streaming high-resolution video at a high frame rate, such as 4K@30FPS, using GStreamer, the vaapijpegdec decoder often stops when it receives corrupted frames. While the decoder is busy decoding a frame, the subsequent frames are stored in the URB queue. However, when the host process is delayed, the URB queue also overflows. When this happens, the incoming frame overwrites the previous frame data, which results in frame corruption.

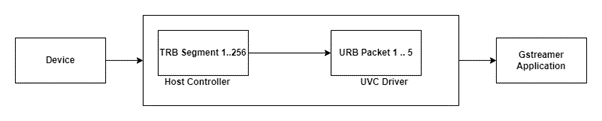

The diagram above represents how this default USB transaction happens in the kernel.

Now, on receiving these corrupted frames, the vaapijpegdec decoder fails to decode and stops abruptly, which causes the GStreamer pipeline to fail. The USB queue size is typically high (TRB segment 256), which can prevent frame corruption. But if the queue size in the UVC driver (URB 5) is not adequate, frame corruption still occurs. To avoid this issue, the queue size of the driver (the number of UVC URBs and the packet size) needs to be increased.

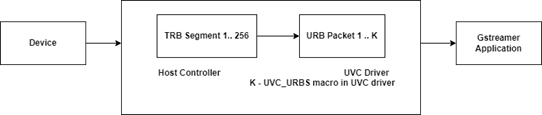

The diagram above depicts how the USB transaction looks after increasing the queue size.

Another method to address this challenge is to increase the packet size of each URB. For example, See3CAM_CU135 from e-con Systems (a 4K USB camera board) has a default DMA buffer size of 36KB, while the default URB packet size is 32KB. This means that we need two URBs to completely transfer one DMA buffer: one carrying 32KB and the other carrying the remaining 4KB.

However, this leads to wastage since only a fraction of the second URB is utilized (4KB out of 32KB). This in turn increases the number of URBs filled in the queue.

So, to use this technique efficiently, we need to specify the maximum packet size of the URB as 64KB. By doing so, we can accommodate an entire 36KB DMA buffer in a single URB packet instead of using two URB packets. This further reduces the number of URBs occupied in the queue.
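The arithmetic behind this can be sketched as follows. It is a simplified model that assumes each URB carries data from only one DMA buffer, as described above, and that the queue holds 5 URBs (the default quoted in this article). The helper names are ours and do not come from the driver.

```python
KIB = 1024
UVC_URBS = 5  # default URB queue depth quoted in this article


def urbs_per_dma_buffer(dma_bytes: int, urb_bytes: int) -> int:
    """URBs needed to carry one DMA buffer (ceiling division)."""
    return -(-dma_bytes // urb_bytes)


def dma_buffers_per_queue(dma_bytes: int, urb_bytes: int,
                          urbs: int = UVC_URBS) -> int:
    """Whole DMA buffers that fit in the queue at once."""
    return urbs // urbs_per_dma_buffer(dma_bytes, urb_bytes)


# Compare the default 32KB URB size with the proposed 64KB size
# for a 36KB DMA buffer.
for urb_kib in (32, 64):
    per_buffer = urbs_per_dma_buffer(36 * KIB, urb_kib * KIB)
    in_queue = dma_buffers_per_queue(36 * KIB, urb_kib * KIB)
    print(f"{urb_kib}KB URBs: {per_buffer} per DMA buffer, "
          f"{in_queue} DMA buffers in a {UVC_URBS}-URB queue")
```

Under these assumptions, the same 5-URB queue holds more complete DMA buffers when each buffer fits in one URB, which is the effect the larger packet size is meant to achieve.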

With these two modifications, the host can overcome the frame corruption issue. Both changes are made in the uvcvideo driver module of the kernel source package. Let us now see how that is done.

Modifying the values in kernel source code

The UVC driver can be found in the location /linux-5.11.0/drivers/media/usb/uvc/. By navigating to this location, you can see various driver-specific files. To change the number of URBs and the maximum packet size of each URB, follow the steps below:

- Open the uvcvideo.h file and change the “UVC_URBS” macro to a higher value. If the default value is 5, increasing it to a higher value, chosen according to the frame size, will improve performance and significantly reduce frame corruption.

- The packet size can be modified by changing the “UVC_MAX_PACKETS” macro to a higher value that is a power of 2. If the default value is 32, increasing it to 64 can improve performance when the attached device has a buffer size greater than 32KB.

- After changing these values, open the terminal in the root directory of the source code package and run the following command:

- sudo make ARCH=x86_64

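- After the command has executed without any issues, copy the rebuilt uvcvideo.ko file to the installed module directory by executing the following command from the same directory. The version directory under /lib/modules (5.11.22 in this example) must match the kernel you are running:

- sudo cp drivers/media/usb/uvc/uvcvideo.ko /lib/modules/5.11.22/kernel/drivers/media/usb/uvc/uvcvideo.ko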

- After copying, restart the PC to reflect the modifications done to the driver.
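If you would rather script the header change than edit it by hand, a minimal sketch could look like the one below. It assumes the two macros appear in uvcvideo.h as plain `#define NAME <integer>` lines. The `sample` text is a stand-in for the relevant lines rather than a copy of your header, `set_macro` is a hypothetical helper of ours, and the value 16 for UVC_URBS is only an illustrative choice.

```python
import re


def set_macro(header_text: str, name: str, value: int) -> str:
    """Return header_text with the integer in '#define <name> <int>' replaced."""
    pattern = re.compile(rf"^(#define\s+{re.escape(name)}\s+)\d+", re.MULTILINE)
    new_text, count = pattern.subn(rf"\g<1>{value}", header_text)
    if count != 1:
        # Refuse to guess if the macro is missing or defined more than once.
        raise ValueError(f"expected exactly one definition of {name}, found {count}")
    return new_text


# Stand-in for the relevant lines of uvcvideo.h (assumed layout).
sample = (
    "#define UVC_URBS\t\t5\n"
    "#define UVC_MAX_PACKETS\t\t32\n"
)

patched = set_macro(sample, "UVC_URBS", 16)           # illustrative value
patched = set_macro(patched, "UVC_MAX_PACKETS", 64)   # value suggested above
print(patched)
```

To apply this to the real file, you would read uvcvideo.h from the driver directory mentioned above, pass its contents through `set_macro`, and write the result back, ideally after keeping a backup of the original header. The build and copy steps remain the same.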

We hope this article gave you a detailed understanding of how to fix one of the most common challenges in high-resolution imaging: frame corruption in Linux. If you have any further queries on the topic or are looking for help in choosing and integrating a camera into your device, please write to us at camerasolutions@e-consystems.com.

4K and high resolution cameras from e-con Systems

e-con Systems is a leading embedded camera company with 18+ years of experience and expertise in the space. e-con’s wide portfolio of cameras includes many 4K and high resolution cameras with some of the most popular Sony and Onsemi sensors. Following is a comprehensive list of e-con’s high resolution cameras.

- See3CAM_160: 16MP autofocus USB camera

- See3CAM_130: 13MP autofocus USB camera

- See3CAM_CU135: 4K USB camera board

- See3CAM_CU135M: 13MP monochrome USB camera

- See3CAM_CU130: 4K ultra-HD USB camera

- e-CAM180_CUMI1820_MOD: 18MP MIPI camera module

- See3CAM_CU81: 4K HDR USB camera

- e-CAM83_USB: 4K high-resolution HDR USB camera

- e-CAM83_CUMI415_MOD: Sony STARVIS IMX415 4K low light camera module

To have a complete look at e-con Systems’ camera portfolio, please visit our Camera Selector.

Vinoth Rajagopalan is an embedded vision expert with 15+ years of experience in product engineering management, R&D, and technical consultations. He has been responsible for many success stories at e-con Systems, from pre-sales and product conceptualization to launch and support. Having started his career as a software engineer, he currently leads a world-class team to handle major product development initiatives.