NVIDIA recently released its much-hyped DeepStream SDK to the public. The NVIDIA DeepStream SDK builds on the versatility of the GStreamer framework to provide a modular architecture that developers can use to design AI-powered Intelligent Video Analytics (IVA) applications.

The DeepStream SDK lets you build graph-like pipelines that connect the processing plugins required to run a Deep Neural Network (DNN) with minimal latency and overhead. This flexibility makes it straightforward to design complex end-user applications.

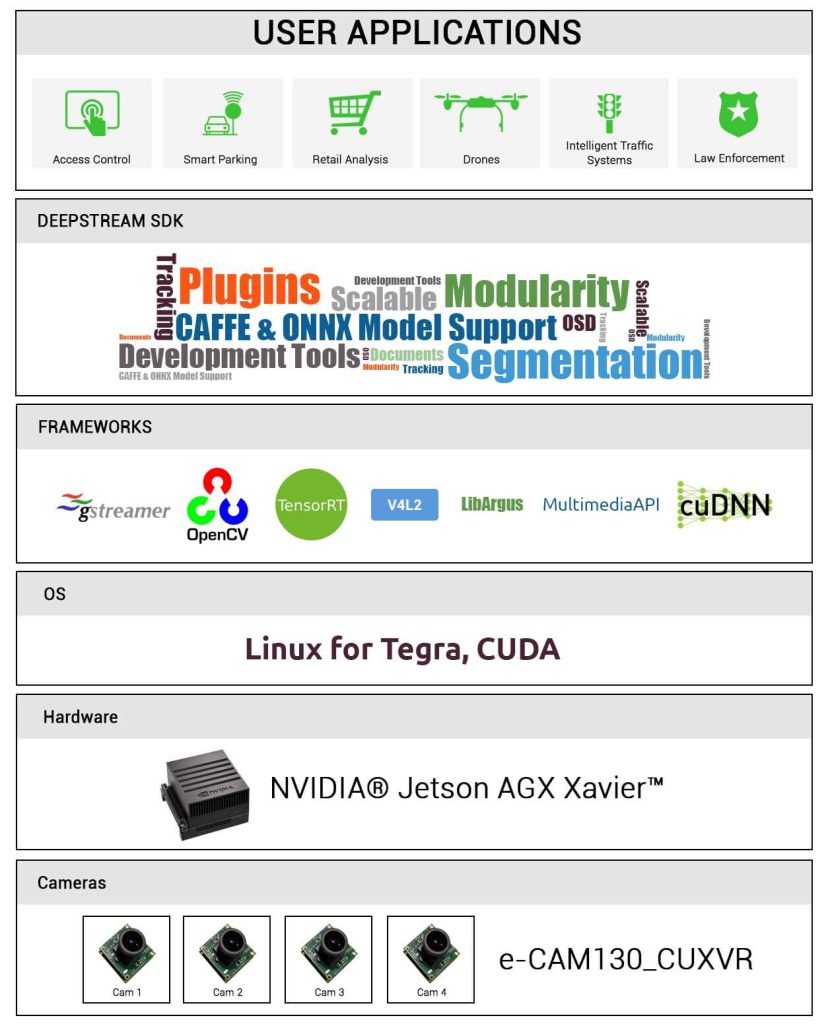

Fig 1: Software and hardware stack for DeepStream SDK 3.0 (© Copyright: e-con Systems™)

Here are some of the GStreamer plugins supported by DeepStream SDK 3.0 on the NVIDIA Jetson AGX Xavier, along with their functions:

| Plugin name | Description |
|---|---|
| nvinfer | Core of the DeepStream SDK; runs DNN models on the GPU through the TensorRT libraries. |
| nvtracker | Tracks detected objects using the CPU or the VIC (Video Image Compositor). |
| nvstreammux | Batches multiple input streams from different sources into a single stream for parallel processing in later stages of the pipeline (e.g., nvinfer). |
| nvstreamdemux | Splits the processed, batched stream back into separate per-source streams based on the source_id metadata in the buffers. |
| nvmultistreamtiler | Creates a 2D tiled image of rows × columns tiles from either batched buffers or a single input source. |
| nvosd | Draws bounding boxes and text onto the image stream based on metadata from an upstream element in the graph. |
| nvdsexample | Template plugin for integrating custom algorithms into a DeepStream SDK graph. |

Table 1: Sample plugins in DeepStream SDK 3.0
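To make the batching and tiling roles in Table 1 more concrete, here is a toy model in plain Python. It is not the DeepStream API: `Frame`, `mux`, `demux`, and `tile_grid` are hypothetical names, and real buffers carry far richer metadata. It only illustrates the idea that nvstreammux groups one frame per source into a batch, nvstreamdemux routes frames back by `source_id`, and nvmultistreamtiler needs a rows × columns grid large enough for every source.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Frame:
    source_id: int  # index of the input stream this frame came from
    pts: int        # presentation timestamp, arbitrary units


def mux(streams):
    """Group the i-th frame of every stream into one batch (toy nvstreammux).

    zip() stops at the shortest stream, so this toy only forms full batches.
    """
    return [list(batch) for batch in zip(*streams)]


def demux(batches):
    """Route every frame back to its own stream using source_id (toy nvstreamdemux)."""
    per_source = defaultdict(list)
    for batch in batches:
        for frame in batch:
            per_source[frame.source_id].append(frame)
    return dict(per_source)


def tile_grid(num_sources):
    """Choose a near-square rows x columns grid with room for every source (num_sources >= 1)."""
    columns = math.ceil(math.sqrt(num_sources))
    rows = math.ceil(num_sources / columns)
    return rows, columns


if __name__ == "__main__":
    # Four cameras, three frames each.
    streams = [[Frame(s, t) for t in range(3)] for s in range(4)]
    batches = mux(streams)
    print(len(batches), len(batches[0]))    # 3 4
    print(demux(batches)[2] == streams[2])  # True
    print(tile_grid(4), tile_grid(5))       # (2, 2) (2, 3)
```

Unlike this sketch, the real nvstreammux does not have to wait for a full batch; it can push a partial batch after a configurable timeout, which matters for live sources.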

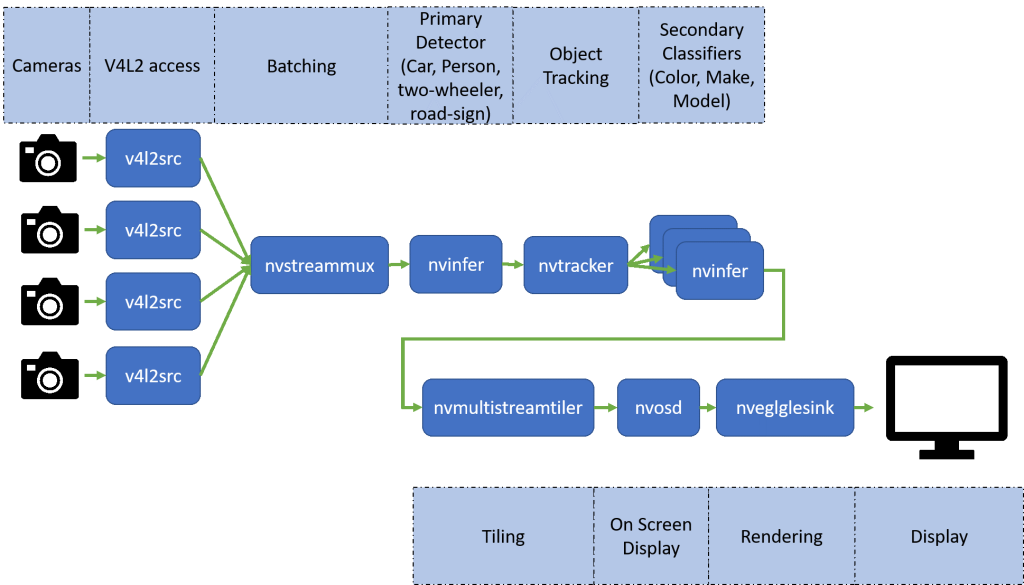

The default graph used in DeepStream SDK 3.0 reference application allows to decode from a file source and run an inference engine on the video stream. We tried modifying the graph a little bit to support different live camera sources such as RAW bayer cameras and YUYV cameras. The following figure shows the graph we ended up with :

Fig 2 : Architecture of e-con’s configuration for e-CAM130A_CUXVR (© Copyright: e-con Systems™)

We provide a simple configuration file for developers who want to use our e-CAM130A_CUXVR (Four Synchronized 4K Cameras for NVIDIA Jetson AGX Xavier) with DeepStream to build their own IVA applications. With this configuration, all four cameras stream simultaneously at 1080p and 60 fps while object detection runs without any frame loss.
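Four 1080p streams at 60 fps with no frame loss implies a concrete processing budget. The arithmetic below is a back-of-the-envelope sketch, not a measurement: the 2 bytes/pixel figure assumes a packed YUV 4:2:2 format such as UYVY (it is not taken from e-con's configuration), and the deadline assumes all four frames are batched together (batch size 4) and every frame goes through inference.

```python
def pixel_rate(cameras, width, height, fps):
    """Total pixels per second across all cameras."""
    return cameras * width * height * fps


def byte_rate(cameras, width, height, fps, bytes_per_pixel):
    """Uncompressed bytes per second; bytes_per_pixel depends on the pixel format."""
    return pixel_rate(cameras, width, height, fps) * bytes_per_pixel


def batch_deadline_ms(fps):
    """Time available per batch if every frame must be processed."""
    return 1000.0 / fps


if __name__ == "__main__":
    print(pixel_rate(4, 1920, 1080, 60))     # 497664000
    print(byte_rate(4, 1920, 1080, 60, 2))   # 995328000 (assumed 2 bytes/pixel, e.g. UYVY)
    print(round(batch_deadline_ms(60), 2))   # 16.67
```

In other words, the pipeline has to handle roughly 0.5 gigapixels (about 1 GB of uncompressed 4:2:2 data) per second and finish each four-frame batch in about 16.7 ms.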

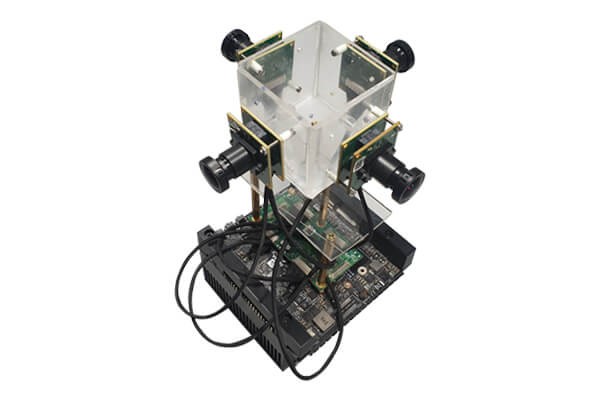

Fig 3: e-CAM130A_CUXVR connected to the Jetson AGX Xavier

The e-CAM130A_CUXVR is a synchronized multi-camera solution for the NVIDIA Jetson AGX Xavier development kit that supports up to four 13 MP, 4-lane MIPI CSI-2 camera boards. Each camera is based on the e-CAM137A_CUMI1335_MOD, which combines a 1/3.2″ AR1335 color CMOS image sensor from ON Semiconductor® with an integrated high-performance Image Signal Processor (ISP). Each camera connects to the base board through a 30 cm micro-coaxial cable. These 4-lane MIPI camera modules can stream 4K video at 30 fps, making them well suited to high-end multi-camera systems.
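To see why four MIPI lanes matter for 4K at 30 fps, you can estimate the data rate each lane must carry. This is a rough sketch under stated assumptions: 4K is taken as 3840 × 2160, the ISP output is assumed to be 16 bits per pixel (a YUV 4:2:2 format such as UYVY), and blanking intervals and CSI-2 packet overhead are ignored, so the real link rate is somewhat higher.

```python
def per_lane_gbps(width, height, fps, bits_per_pixel, lanes):
    """Raw pixel payload per MIPI CSI-2 lane in Gbit/s (ignores blanking and packet overhead)."""
    return width * height * fps * bits_per_pixel / lanes / 1e9


if __name__ == "__main__":
    # Assumptions: 4K UHD (3840x2160), 16 bits/pixel (e.g. UYVY), 4 lanes.
    print(round(per_lane_gbps(3840, 2160, 30, 16, 4), 3))  # 0.995
```

That is roughly 1 Gbit/s of payload per lane; carrying the same stream over a single lane would need about four times that.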

Getting started with DeepStream on our cameras is easy. Just follow these steps:

- Install our camera drivers (provided in our release package) on the Jetson AGX Xavier.

- Install the DeepStream SDK by following NVIDIA's official documentation.

- Move the configuration file provided by e-con to the following location: `<deepstream_install_dir>/deepstream_sdk_on_jetson/samples/configs/deepstream-app/`

- Then run the following command to start a DNN that detects and tracks objects such as cars, road signs, bicycles, and people:

```shell
deepstream-app -c e-CAM130A_CUXVR_1080p_4cams_primary_and_secondary_detectors.txt
```
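For readers who want to adapt the idea to their own camera setup, the sketch below generates the camera-related groups of a deepstream-app configuration file with Python's `configparser`. It is not e-con's configuration file. The group and key names (`[sourceN]` with `type=1` for a V4L2 camera, `camera-width`, `camera-height`, `camera-fps-n`, `camera-fps-d`, `camera-v4l2-dev-node`, and `[streammux]` with `live-source` and `batch-size`) are assumptions based on the deepstream-app configuration format, and the mapping of camera i to `/dev/video<i>` is hypothetical. Check both against your DeepStream version and system; a complete file also needs inference, tracker, tiler, OSD, and sink groups.

```python
import configparser
import io


def build_camera_config(num_cameras=4, width=1920, height=1080, fps=60):
    """Return a ConfigParser holding [sourceN] and [streammux] groups.

    Key names are assumed from the deepstream-app config format; verify them
    against your DeepStream version. Camera i is assumed to be /dev/video<i>.
    """
    cfg = configparser.ConfigParser()
    for i in range(num_cameras):
        cfg[f"source{i}"] = {
            "enable": "1",
            "type": "1",                     # assumed: 1 = V4L2 camera source
            "camera-width": str(width),
            "camera-height": str(height),
            "camera-fps-n": str(fps),        # frame rate numerator
            "camera-fps-d": "1",             # frame rate denominator
            "camera-v4l2-dev-node": str(i),  # assumed: N in /dev/videoN
        }
    cfg["streammux"] = {
        "live-source": "1",                  # inputs are live cameras, not files
        "batch-size": str(num_cameras),      # one frame per camera per batch
        "width": str(width),
        "height": str(height),
    }
    return cfg


def render(cfg):
    """Serialize as key=value lines without spaces around '='."""
    buf = io.StringIO()
    cfg.write(buf, space_around_delimiters=False)
    return buf.getvalue()


if __name__ == "__main__":
    print(render(build_camera_config()))
```

Setting `batch-size` equal to the number of cameras mirrors the nvstreammux role in Table 1: each batch carries one frame from every camera.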

The configuration file can also be modified easily to support USB cameras such as the e-con Hyperyon®, which streams 1080p at 60 fps in H.264 format. Here is a video of DeepStream running on the Jetson AGX Xavier with the e-CAM130A_CUXVR.

Please contact sales@e-consystems.com to learn more about the configuration.

Update 30-Apr-2019: The configuration file can be downloaded directly here:

e-CAM130A_CUXVR_1080p_4cams_primary_and_secondary_detectors.txt

Dilip Kumar is a computer vision solutions architect with more than 8 years of experience in camera solution development and edge computing. He has spearheaded the research and development of computer vision and AI products for the nascent edge AI industry. He has been at the forefront of building multiple vision-based products using embedded SoCs for industrial use cases such as autonomous mobile robots, AI-based video analytics systems, and drone-based inspection and surveillance systems.