In this blog, you’ll explore:

- Why traditional accident investigations miss the full event sequence

- How continuous visual records improve liability review and reconstruction

- How synchronized multi-camera footage validates crash timelines

- How AI-based crash analytics shapes road safety planning

Every year, approximately 1.19 million people die in road crashes worldwide, as reported by the WHO. However, the investigations that follow tend to rely on incomplete foundations. For instance, dash cam footage covers only one angle. The result is a gap between what actually happened and what can be proven.

Vision-based crash intelligence brings together real-time image capture, AI-powered analytics, and multi-source synchronization so that modern road safety initiatives can leverage verifiable, data-driven accountability. This has the potential to change how agencies investigate and how cities design safer infrastructure.

The sections below cover why traditional post-accident investigations fall short, how visual evidence transforms the process, and what smarter road safety looks like in practice.

3 Reasons Why Traditional Post-Accident Investigations Fall Short

- Most accident investigations begin too late and rely too much on subjective sources. By the time investigators arrive at a scene, critical physical evidence has been disturbed, removed, or degraded by weather and traffic. What remains is largely interpretive.

- Witness testimony may be unreliable. Cognitive research consistently shows that under stress, human memory encodes events selectively. Bystanders recall different details, misremember sequencing, and unconsciously fill gaps with assumptions. When accounts conflict (which they frequently do), investigators have little objective basis for resolution.

- Physical reconstruction has its own limitations. Skid marks help estimate a vehicle’s speed at the onset of braking, but only if the road surface is consistent and the marks are preserved. Damage patterns indicate impact force, but not the exact sequence of events. Neither approach captures driver behavior, signal states, pedestrian movement, or environmental conditions in the seconds before impact.
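To see why skid-based estimates depend so heavily on the road surface, consider the standard constant-deceleration relation v = sqrt(2 · μ · g · d). The sketch below is illustrative only: the skid length and the two friction coefficients are assumed values chosen for the example, not measurements from any investigation.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def speed_from_skid(skid_length_m: float, friction_coeff: float) -> float:
    """Estimate speed (m/s) at the start of a skid that ends in a full stop.

    Uses v = sqrt(2 * mu * g * d), which assumes constant deceleration
    on a level, uniform surface.
    """
    if skid_length_m < 0 or friction_coeff <= 0:
        raise ValueError("skid length must be >= 0 and friction coefficient > 0")
    return math.sqrt(2 * friction_coeff * G * skid_length_m)


def to_kmh(speed_ms: float) -> float:
    """Convert metres per second to kilometres per hour."""
    return speed_ms * 3.6


if __name__ == "__main__":
    skid_length = 20.0  # metres (assumed)
    # Friction coefficients below are assumed, illustrative values.
    for surface, mu in [("dry surface", 0.7), ("wet surface", 0.4)]:
        v = speed_from_skid(skid_length, mu)
        print(f"{surface}: about {to_kmh(v):.0f} km/h")
```

With these assumed inputs, the same 20 m skid maps to roughly 60 km/h on the dry surface and roughly 45 km/h on the wet one, so an uncertain surface condition alone can shift the estimate considerably.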

The consequences of these challenges are:

- Insurance claims take weeks or months to resolve due to disputed liability.

- Fleet operators can’t identify if an incident was caused by driver error, vehicle condition, or external hazard.

- Urban planners lack the granular data to target infrastructure improvements effectively.

- Legal proceedings rely on circumstantial evidence.

How Visual Evidence Transforms the Investigation Process

Embedded vision systems mounted across vehicles, intersections, and transport corridors maintain a continuous visual record before, during, and after an incident. That record does not degrade over time and cannot be displaced by an emergency response. It captures what happened, where it happened, and in what sequence, with verifiable timestamps attached to every frame.

Investigators no longer need to reconcile conflicting accounts. Instead, they can verify events directly. Moreover, liability assessments that previously required weeks of documentation can be resolved through structured video review.

Behavioral data, such as gaze direction or eyelid movement before impact, also becomes part of the factual record. Its value extends beyond individual incidents. For instance, a single collision yields one data point. Thousands of incidents, recorded and indexed across a fleet or road network, yield a pattern.

High-resolution footage from strategically placed cameras captures not only the crash itself but also the environment, traffic flow, road conditions, and signal states. Aggregated across incidents, it builds a broader understanding of where and why crashes occur.

Modern camera systems include front-facing, rear-view, surround-view, driver monitoring, and roadside units. Each camera type contributes a different layer of this record. When combined, they eliminate blind spots in both space and time, creating a forensic foundation that purely physical evidence can’t match.

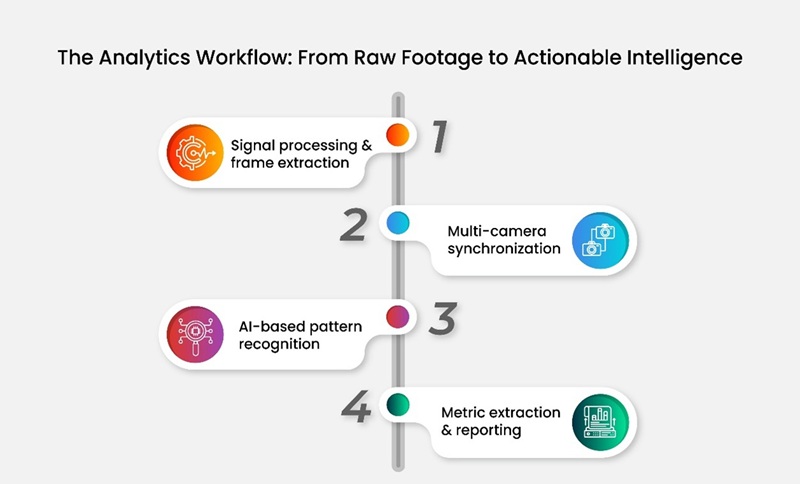

The Analytics Workflow: From Raw Footage to Actionable Intelligence

Step 1: Signal processing and frame extraction

Image Signal Processors (ISPs) handle the first layer of transformation. Raw sensor output is processed for exposure, noise reduction, and dynamic range correction. This is critical in high-contrast scenarios like early morning collisions or nighttime incidents under artificial lighting. Every frame is tagged with spatial and temporal metadata, such as GPS coordinates and timestamps accurate to the millisecond.
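As a rough illustration of per-frame tagging, the sketch below attaches a millisecond timestamp and GPS coordinates to each frame index. The `FrameTag` structure, its field names, and the `tag_frames` helper are hypothetical and exist only for this example; they do not describe any particular camera's metadata format.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FrameTag:
    """Hypothetical per-frame metadata record (illustrative field names)."""
    camera_id: str
    frame_index: int
    timestamp_ms: int  # milliseconds since a shared epoch
    latitude: float
    longitude: float


def tag_frames(camera_id: str, start_ms: int, fps: float, n_frames: int,
               latitude: float, longitude: float) -> List[FrameTag]:
    """Generate tags for n_frames captured at a fixed frame rate.

    Assumes a stationary camera, so every frame shares one GPS position.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return [
        FrameTag(camera_id, i, start_ms + round(i * 1000 / fps),
                 latitude, longitude)
        for i in range(n_frames)
    ]
```

A moving, vehicle-mounted camera would instead need a position sample per frame, which this simplified version does not model.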

Step 2: Multi-camera synchronization

Footage from multiple sources (inside the cabin, around the vehicle perimeter, or from nearby infrastructure cameras) is aligned into a unified timeline. Synchronization resolves perspective conflicts, confirming whether a driver had begun braking before impact or whether a pedestrian entered the road before or after a signal change. This temporal alignment is what separates crash intelligence from the review of individual videos.
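One simple way to picture this alignment is nearest-timestamp matching: for each frame on a reference camera, pick the closest frame from every other camera and discard matches that are too far apart. The sketch below assumes all cameras already share a common clock and that timestamps are sorted; the function names and the 20 ms tolerance are illustrative assumptions, and production systems may rely on hardware triggering instead.

```python
from bisect import bisect_left
from typing import Dict, List, Optional, Tuple


def nearest_index(sorted_ts: List[int], target: int) -> int:
    """Return the index of the timestamp in sorted_ts closest to target."""
    pos = bisect_left(sorted_ts, target)
    if pos == 0:
        return 0
    if pos == len(sorted_ts):
        return len(sorted_ts) - 1
    before, after = sorted_ts[pos - 1], sorted_ts[pos]
    # Ties go to the earlier frame.
    return pos if (after - target) < (target - before) else pos - 1


def align_streams(
    reference_ts: List[int],
    other_streams: Dict[str, List[int]],
    tolerance_ms: int = 20,
) -> List[Tuple[int, Dict[str, Optional[int]]]]:
    """Build a unified timeline keyed on the reference camera's timestamps.

    For each reference timestamp, each other camera contributes its nearest
    frame timestamp, or None if the gap exceeds tolerance_ms.
    """
    timeline = []
    for t in reference_ts:
        matches: Dict[str, Optional[int]] = {}
        for camera, ts_list in other_streams.items():
            if not ts_list:
                matches[camera] = None
                continue
            candidate = ts_list[nearest_index(ts_list, t)]
            matches[camera] = candidate if abs(candidate - t) <= tolerance_ms else None
        timeline.append((t, matches))
    return timeline
```

Returning `None` for out-of-tolerance frames keeps gaps visible in the timeline rather than silently pairing frames that were not captured at the same moment.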

Step 3: AI-based pattern recognition

Trained AI models process the synchronized data to detect and classify objects, movement trajectories, and behavioral indicators. This means that:

- Vehicle paths are tracked frame by frame

- Driver gaze and head position are analyzed for signs of distraction or fatigue

- Pedestrian and cyclist movements are identified and correlated with vehicle response times
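Frame-by-frame path tracking can be sketched as linking each detection to the nearest detection in the previous frame. The example below is a deliberately simplified stand-in: it assumes an upstream detector has already produced (x, y) centroids per frame, and it uses greedy nearest-centroid matching rather than the more robust association methods a real tracker would likely use. The function name and the `max_jump` threshold are assumptions for illustration.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def track_centroids(frames: List[List[Point]],
                    max_jump: float = 50.0) -> Dict[int, List[Tuple[int, float, float]]]:
    """Link per-frame centroids into tracks by greedy nearest-neighbour matching.

    frames: one list of (x, y) centroids per frame.
    Returns track_id -> list of (frame_index, x, y).
    A track ends when it finds no detection within max_jump in the next frame.
    """
    tracks: Dict[int, List[Tuple[int, float, float]]] = {}
    last_seen: Dict[int, Point] = {}  # tracks matched in the previous frame
    next_id = 0
    for idx, detections in enumerate(frames):
        unmatched = list(detections)
        current: Dict[int, Point] = {}
        for tid, prev in last_seen.items():
            if not unmatched:
                break
            best = min(unmatched, key=lambda p: math.dist(p, prev))
            if math.dist(best, prev) <= max_jump:
                unmatched.remove(best)
                tracks[tid].append((idx, best[0], best[1]))
                current[tid] = best
        # Any detection left over starts a new track.
        for p in unmatched:
            tracks[next_id] = [(idx, p[0], p[1])]
            current[next_id] = p
            next_id += 1
        last_seen = current
    return tracks
```

Once each object has a track, its positions over time can be compared with other tracks, for example to relate a pedestrian's movement to a vehicle's response.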

Step 4: Metric extraction and reporting

Processed data is converted into measurable outputs: approach angle, relative velocity, reaction time, collision sequence, and environmental conditions at the moment of impact. These metrics feed directly into incident reports, insurance documentation, and fleet management dashboards.
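A few of these outputs reduce to basic vector arithmetic once object positions and event timestamps are available. The sketch below assumes positions are already expressed in metres in a common ground-plane coordinate system, and that the moments of hazard visibility and brake onset have been identified upstream; all function names are hypothetical.

```python
import math
from typing import Tuple

Vec = Tuple[float, float]


def velocity(p0: Vec, p1: Vec, dt_s: float) -> Vec:
    """Average velocity (m/s) between two positions dt_s seconds apart."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    return ((p1[0] - p0[0]) / dt_s, (p1[1] - p0[1]) / dt_s)


def approach_angle_deg(v_a: Vec, v_b: Vec) -> float:
    """Angle between two headings: 0 = same direction, 180 = head-on."""
    norm = math.hypot(*v_a) * math.hypot(*v_b)
    if norm == 0:
        raise ValueError("both objects must be moving")
    dot = v_a[0] * v_b[0] + v_a[1] * v_b[1]
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def relative_speed(v_a: Vec, v_b: Vec) -> float:
    """Magnitude of the velocity difference between two objects (m/s)."""
    return math.hypot(v_a[0] - v_b[0], v_a[1] - v_b[1])


def reaction_time_ms(hazard_visible_ms: int, brake_onset_ms: int) -> int:
    """Elapsed time between a hazard becoming visible and braking starting."""
    return brake_onset_ms - hazard_visible_ms
```

For example, two vehicles each travelling at 10 m/s on perpendicular headings have an approach angle of 90 degrees and a relative speed of about 14.1 m/s.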

Benefits of Integrating Crash Intelligence into Road Safety Initiatives

- Faster investigation and claim resolution: Time-stamped, frame-level footage replaces fragmented accounts. So, insurance assessors, legal teams, and transport authorities can review verified evidence rather than spending weeks reconciling conflicting reports.

- Improved reconstruction accuracy: Synchronized visuals provide a complete spatial and temporal record. Analysts can measure vehicle trajectories, determine impact timing, and verify signal states with a level of precision that physical reconstruction can’t approach.

- Strengthened legal and insurance transparency: Objective, verifiable footage removes ambiguity from liability disputes. Hence, insurers gain a clear factual basis for claim assessment while legal proceedings move faster.

- Targeted driver training: Replay data from real incidents highlights specific behavioral failures like delayed braking, insufficient mirror checks, and inattention before lane changes. Fleet operators can build training modules around documented patterns rather than generic scenarios.

- Data-driven infrastructure improvements: Aggregated visual data from high-incident zones reveals design failures, visibility gaps, and signal timing errors that contribute to crash risk. Urban planners receive actionable input for intersection redesign, signage upgrades, and lighting improvements.

- Predictive risk modeling: Over time, visual datasets aggregated across fleets and road networks reveal behavioral and environmental patterns that precede crashes. Safety teams can intervene before incidents occur.

Smart Road Safety: Moving from Reactive to Predictive Systems

The traditional model of road safety is reactive. Crash intelligence enables a proactive approach built on continuous data collection and predictive risk management.

When camera networks are integrated with traffic management systems, the data generated feeds live dashboards, flags behavioral anomalies in real time, and populates risk maps that transport agencies use for ongoing planning.

Fleet operators can easily move from incident-based reviews to continuous behavioral monitoring. They receive pattern-based alerts when behavior across the fleet begins trending toward risks such as:

- Increased hard-braking frequency

- Rising late-response incidents

- Deteriorating compliance at specific intersections
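A pattern-based alert of this kind can be as simple as comparing a recent window of a metric against its earlier baseline. The sketch below assumes a weekly hard-braking rate per fleet has already been computed from the footage; the function name, the four-week window, and the 25% threshold are illustrative assumptions rather than recommended settings.

```python
from statistics import mean
from typing import Sequence


def trending_up(weekly_rates: Sequence[float],
                recent_weeks: int = 4,
                threshold: float = 1.25) -> bool:
    """Flag a metric whose recent average exceeds its earlier baseline.

    weekly_rates: oldest-to-newest values, e.g. hard-braking events per 100 km.
    Returns True when the recent mean is at least `threshold` times the
    baseline mean. With too little history, returns False.
    """
    if recent_weeks <= 0:
        raise ValueError("recent_weeks must be positive")
    if len(weekly_rates) <= recent_weeks:
        return False
    baseline = mean(weekly_rates[:-recent_weeks])
    recent = mean(weekly_rates[-recent_weeks:])
    if baseline == 0:
        return recent > 0
    return recent / baseline >= threshold


if __name__ == "__main__":
    # Assumed sample data: four stable weeks followed by four rising weeks.
    rates = [2.0, 2.1, 1.9, 2.0, 2.4, 2.6, 2.7, 2.9]
    print("alert" if trending_up(rates) else "no alert")
```

The same comparison could be applied per intersection or per driver group to surface the other trends listed above.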

At the city level, smart road safety requires integrating data from multiple camera-based devices, such as intersection monitors, ANPR systems, and in-vehicle units, into a single platform. Only then can planners and emergency services get a shared operational picture.

State-of-the-Art ITS Cameras by e-con Systems

e-con Systems has been designing, developing, and manufacturing OEM and ODM camera solutions since 2003. Our high-performance Intelligent Transportation System (ITS) cameras deliver high-quality imaging across the full spectrum of road safety applications (live traffic monitoring, violation detection, post-accident reconstruction, predictive analytics, and more).

Key imaging features:

- Global shutter and HDR

- Low-light optimization

- Multi-camera synchronization

- GigE and GMSL interfaces

- Rugged IP-rated enclosures (following NEMA-TS2, FCC Part 15, NDAA, and BABA compliance standards)

- Native compatibility with NVIDIA Jetson and other platforms for real-time analytics

Explore our complete ITS camera portfolio

Use our Camera Selector to explore our end-to-end portfolio

You can also write to our experts at camerasolutions@e-consystems.com if you’d like to discuss the right imaging solution for your ITS application.

FAQs

Why do many post-accident investigations end in disputes?

Investigators often reach the scene after physical traces have shifted, weather and traffic have altered the site, and witness memory varies under stress. Skid marks and vehicle damage help, yet they rarely show driver behavior, signal states, pedestrian movement, or the sequence leading into impact.

How does visual evidence change crash investigations?

Continuous camera coverage across vehicles, intersections, and transport corridors preserves a time-stamped visual record before, during, and after impact. Investigators can verify sequence, location, and surrounding conditions.

Why does multi-camera synchronization matter in crash intelligence?

Synchronization aligns footage from cabin cameras, vehicle perimeter cameras, and roadside units into one timeline. This helps investigators confirm braking timing, signal changes, pedestrian entry, and vehicle response from multiple viewpoints, which gives the incident a far richer evidentiary base.

What type of insights can AI extract from crash footage?

AI models can track vehicle paths frame by frame, examine driver gaze and head position for distraction or fatigue, and correlate pedestrian or cyclist movement with vehicle response times. The processed output can then be converted into metrics such as reaction time, relative velocity, approach angle, environmental conditions, and collision sequence.

How does crash intelligence help road safety programs beyond a single incident?

When visual data is aggregated across fleets or road networks, agencies and operators can spot recurring patterns such as hard braking, late response, visibility gaps, poor signal timing, or recurring risk at particular intersections. That insight can impact driver training, claim review, infrastructure upgrades, live monitoring, and predictive risk mapping.

Dilip Kumar is a computer vision solutions architect with more than 8 years of experience in camera solutions development and edge computing. He has spearheaded research and development of computer vision and AI products for the nascent edge AI industry. He has been at the forefront of building multiple vision-based products using embedded SoCs for industrial use cases such as autonomous mobile robots, AI-based video analytics systems, and drone-based inspection and surveillance systems.