Designing a ROS 2-based autonomous docking system is only half the story. Deploying it in the real world is another matter entirely. Real industrial environments don’t cooperate with textbook assumptions, so understanding the challenges they pose will help you assess what ‘robust’ really means in this context.

In part 1 of this blog series, you learned about the architecture of a ROS 2-based autonomous docking system through e-con Systems’ latest project. You saw how different components work together to empower mobile robot fleets.

In part 2, you’ll get expert insights into the engineering challenges that determine whether a docking system works reliably in production, and how you can overcome them.

Let’s explore these practical issues one by one.

How e-con Systems Solves 5 Key Autonomous Docking Challenges

1) Real-time ArUco detection on resource-constrained hardware

Challenge:

Standard ArUco implementations may not be optimized for the low-compute embedded environments typical of industrial mobile robots. The result can be detection lag that degrades docking alignment accuracy.

Our solution:

e-con Systems’ dedicated, real-time optimized ArUco detection package comes with an efficient image processing pipeline. It is created as a modular, standalone component decoupled from docking control logic and reusable across other applications.
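To illustrate what "decoupled from docking control logic" can look like in practice, here is a minimal sketch in plain Python. It is not e-con Systems' package: `MarkerDetection`, `DetectionPublisher`, and the marker IDs are hypothetical names chosen for illustration, and plain callbacks stand in for what would normally be a ROS 2 topic so the sketch runs without ROS installed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarkerDetection:
    """One detected marker (hypothetical message type)."""
    marker_id: int
    corners: tuple  # four (x, y) pixel coordinates

    def center(self):
        """Average of the four corner points, in pixels."""
        xs = [c[0] for c in self.corners]
        ys = [c[1] for c in self.corners]
        return sum(xs) / 4.0, sum(ys) / 4.0


class DetectionPublisher:
    """Stand-in for a topic: the detector knows nothing about its consumers."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def publish(self, detections):
        for callback in self._subscribers:
            callback(detections)


# Two independent consumers of the same detection stream.
publisher = DetectionPublisher()
dock_hits, frame_counts = [], []
publisher.subscribe(lambda ds: dock_hits.extend(d for d in ds if d.marker_id == 7))
publisher.subscribe(lambda ds: frame_counts.append(len(ds)))

square = ((0, 0), (10, 0), (10, 10), (0, 10))
publisher.publish([MarkerDetection(7, square), MarkerDetection(3, square)])
```

Because the detector only publishes detections, a docking controller and, say, an inspection node can consume the same stream without either one knowing about the other.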

Business impact:

- Reliable real-time detection on embedded hardware without a dedicated GPU

- Adaptable to docking, localization, inspection, and other marker-based applications

- Fully configurable detection parameters to suit different hardware and environments

2) Precise docking under navigation offset

Challenge:

Nav2 path following may be tuned for general navigation rather than sub-centimeter final positioning. When that is the case, even small yaw or lateral errors on arrival at the docking zone can cause docking to fail.

Our solution:

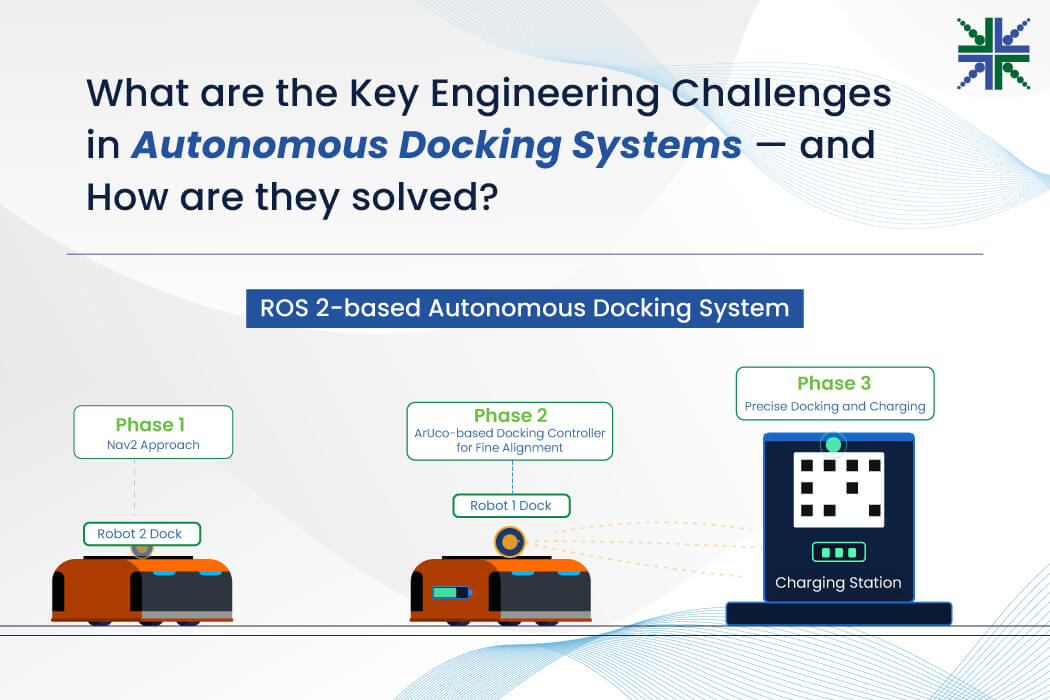

e-con Systems implements a two-phase approach. First, Nav2 delivers the robot to the docking zone. Then, the ArUco-based docking controller takes over for fine alignment, independently correcting heading and lateral error by using marker detection and LiDAR.
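The fine-alignment phase can be sketched as a simple proportional control step. This is a hedged illustration for a differential-drive robot, not the actual docking controller: the `MarkerObservation` fields, the gains, the speed limits, and the LiDAR stop range are all assumed values chosen for readability.

```python
import math
from dataclasses import dataclass


@dataclass
class MarkerObservation:
    forward_m: float          # distance to the marker along the robot's x axis
    lateral_m: float          # sideways offset, positive = marker on the left
    heading_error_rad: float  # rotation still needed to face the dock squarely


def clamp(value, limit):
    """Limit value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def fine_align_step(obs, lidar_range_m, k_lateral=1.5, k_heading=1.0,
                    approach_speed=0.05, stop_range_m=0.10, max_angular=0.5):
    """Return (linear m/s, angular rad/s) for one control step.

    All gains and limits are hypothetical defaults.
    """
    if lidar_range_m <= stop_range_m:
        return 0.0, 0.0  # LiDAR says the dock is reached: stop
    # Lateral error expressed as the bearing from the robot to the marker.
    bearing = math.atan2(obs.lateral_m, obs.forward_m)
    # Heading and lateral terms are computed separately, then summed.
    angular = clamp(k_lateral * bearing + k_heading * obs.heading_error_rad,
                    max_angular)
    # Creep forward more slowly while the marker is far off-axis.
    linear = approach_speed * max(0.0, math.cos(bearing))
    return linear, angular
```

In this sketch, Nav2 only needs to bring the robot close enough for the marker to be visible; the loop above then absorbs whatever yaw or lateral offset remains.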

Business impact:

- Works with the standard Nav2 stack without requiring precision path tuning

- Robust docking even in environments where exact path repeatability is not guaranteed

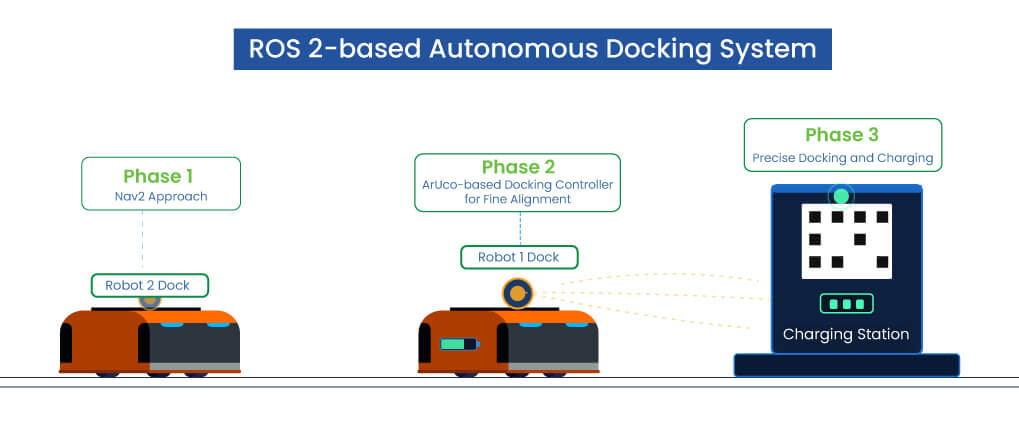

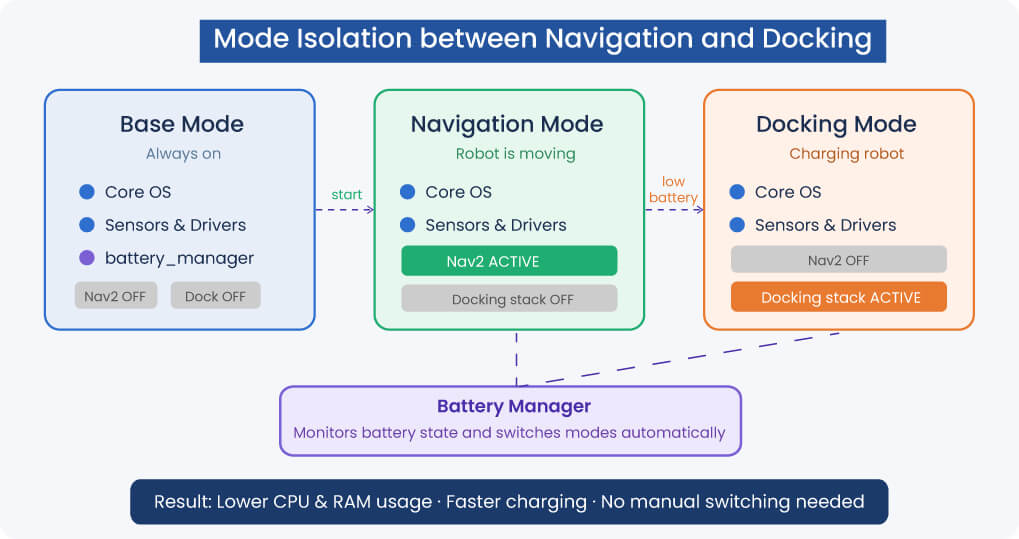

3) Mode isolation between navigation and docking

Challenge:

Running the full Nav2 stack during docking can waste CPU and RAM and cause interference between navigation cost maps and docking sensor logic.

Our solution:

Our system defines three mode-based services (base, nav, and docking), so the Nav2 and docking stacks start and stop independently instead of running side by side. The battery_manager automates all transitions based on battery state. Depending on your stack and requirements, you can write your own boot scripts to bring up the appropriate service on startup.
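A battery-driven mode switch of this kind might look like the sketch below. The article does not show the battery_manager's internals, so the thresholds, the `at_dock_zone` flag, and the function names are assumptions made purely for illustration.

```python
from enum import Enum


class Mode(Enum):
    BASE = "base"
    NAV = "nav"
    DOCKING = "docking"


def next_mode(current, battery_pct, at_dock_zone, is_charging,
              low_pct=20.0, full_pct=95.0):
    """Pick the mode that should be active next (hypothetical thresholds)."""
    # Low battery: hand over to docking once Nav2 has reached the dock zone.
    if current is Mode.NAV and battery_pct <= low_pct and at_dock_zone:
        return Mode.DOCKING
    # Charged: leave the dock and return to normal navigation.
    if current is Mode.DOCKING and is_charging and battery_pct >= full_pct:
        return Mode.NAV
    return current


def transition_plan(current, target):
    """Stop the old stack before starting the new one, so only one runs."""
    if current is target:
        return []
    return [("stop", current.value), ("start", target.value)]
```

The point of `transition_plan` is the ordering: the navigation stack is stopped before the docking stack starts, which is what keeps the two from competing for CPU, RAM, or sensor data.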

Business impact:

- Lowered CPU and RAM usage during docking

- Faster charging cycles with no Nav2 overhead

- Clean, automated mode transitions with no manual intervention

4) Power-glitch recovery in real-world environments

Challenge:

Power interruptions, loose pins, and physical disturbances may break charging mid-cycle, leaving robots stranded without supervision.

Our solution:

The charging loop is supervised by a state machine that detects power-loss events. When one occurs, it automatically triggers a full undock, backup, recovery delay, realign, and redock sequence without operator involvement.
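The recovery sequence can be sketched as a small state machine. The state names follow the sequence described above; the `FAILED` state, the retry limit, and the `step` interface are assumptions added for illustration and are not taken from the actual implementation.

```python
from enum import Enum, auto


class ChargeState(Enum):
    CHARGING = auto()
    UNDOCK = auto()
    BACKUP = auto()
    RECOVERY_DELAY = auto()
    REALIGN = auto()
    REDOCK = auto()
    FAILED = auto()  # hypothetical terminal state once retries run out


RECOVERY_SEQUENCE = [
    ChargeState.UNDOCK,
    ChargeState.BACKUP,
    ChargeState.RECOVERY_DELAY,
    ChargeState.REALIGN,
    ChargeState.REDOCK,
]


class ChargeSupervisor:
    def __init__(self, max_retries=3):
        self.state = ChargeState.CHARGING
        self.retries = 0
        self.max_retries = max_retries  # assumed limit

    def step(self, power_ok, step_done=True):
        """Advance one tick; step_done reports the current action finished."""
        if self.state is ChargeState.CHARGING:
            if not power_ok:
                if self.retries >= self.max_retries:
                    self.state = ChargeState.FAILED
                else:
                    self.retries += 1
                    self.state = ChargeState.UNDOCK
        elif self.state is not ChargeState.FAILED and step_done:
            index = RECOVERY_SEQUENCE.index(self.state)
            if index + 1 < len(RECOVERY_SEQUENCE):
                self.state = RECOVERY_SEQUENCE[index + 1]
            else:
                self.state = ChargeState.CHARGING  # redock done, resume charging
        return self.state
```

Calling `step(power_ok=False)` while charging starts the recovery sequence; each later call with `step_done=True` moves to the next stage until the robot is charging again.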

Business impact:

- Fewer failed-charge cycles in real-world deployments

- Higher fleet uptime in noisy industrial environments

- Reduced need for manual rescue operations

5) Multi-robot namespace scaling

Challenge:

Managing docking configuration, topics, and services for multiple robots in the same environment can become complex and error-prone without a clean architectural pattern.

Our solution:

This docking system uses the same namespace-aware ROS 2 architecture as the multi-robot mapping stack. Each robot’s docking nodes, topics, and services are automatically scoped under its namespace, enabling independent docking cycles across a shared infrastructure.
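The effect of namespace scoping can be shown with a small helper that mimics how ROS 2 resolves relative names under a node's namespace (absolute names starting with `/` are left untouched). The interface names in `DOCKING_INTERFACES` are hypothetical examples, not the system's real topic or service names, and private `~` names are not handled in this sketch.

```python
def resolve_name(namespace, name):
    """Resolve a topic or service name the way a namespaced node would."""
    if name.startswith("/"):
        return name  # absolute names ignore the namespace
    ns = namespace.strip("/")
    return f"/{ns}/{name}" if ns else f"/{name}"


# Hypothetical relative names a docking stack might use.
DOCKING_INTERFACES = ["aruco_detections", "cmd_vel", "dock_status"]


def docking_interfaces(robot_namespace):
    """Map each relative name to its fully qualified, per-robot name."""
    return {name: resolve_name(robot_namespace, name)
            for name in DOCKING_INTERFACES}


fleet = {ns: docking_interfaces(ns) for ns in ("robot1", "robot2")}
```

Because every node uses relative names, the same launch configuration can be reused for each robot; only the namespace changes, and `fleet["robot1"]["cmd_vel"]` never collides with `fleet["robot2"]["cmd_vel"]`.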

Business impact:

- Scales to any fleet size with minimal configuration

- Each robot manages its own docking cycle independently

- Clean, modular architecture that is easy to extend and maintain

From Simulation to Robots: Vision for Autonomous Docking Systems

One of the practical engineering goals of this system is to minimize the gap between simulation and physical deployment. This ROS 2 Autonomous Docking System is developed and validated in a simulation environment, and its architecture is deliberately designed so that transitioning to a physical robot requires minimal changes.

The combination of the optimized ArUco detection package, LiDAR approach sensing, and the retry-based state machine provides the robustness needed to handle typical imperfections of real industrial environments, such as:

- Uneven floors

- Lighting variation

- Network latency

- Mechanical tolerances in charging connector alignment

How e-con Systems Enables Autonomous Docking Vision

Since 2003, e-con Systems has been designing, developing, and manufacturing OEM and ODM camera solutions. We offer several cameras that are perfect for vision-based autonomous mobile robots. Some of the capabilities that make our cameras a strong fit for autonomous docking systems are:

- Wide dynamic range imaging

- ISP-tuned for embedded platforms

- ROS 2-ready

- Production-grade reliability

- Custom camera design services

To explore our full portfolio, visit our Camera Selector page.

And if you are building or evaluating an autonomous docking system and want to discuss the vision requirements, please write to camerasolutions@e-consystems.com.

FAQs

- What challenge does real-time ArUco detection create on resource-constrained hardware?

Standard ArUco implementations can create detection lag in low-compute embedded environments used by industrial mobile robots. That lag can affect docking alignment accuracy. e-con Systems’ solution is a dedicated real-time optimized ArUco detection package with an efficient image processing pipeline, built as a modular, standalone component decoupled from docking control logic and reusable for other marker-based applications.

- How does the system handle precise docking when the robot reaches the docking zone with yaw or lateral error?

The system uses a two-phase approach. First, Nav2 delivers the robot to the docking zone. Then, the ArUco-based docking controller takes over for fine alignment and independently corrects heading and lateral error by using marker detection and LiDAR.

- Why does the docking system isolate navigation mode from docking mode?

Running the full Nav2 stack during docking can waste CPU and RAM and can also cause interference between navigation cost maps and docking sensor logic. To address this, the system uses three mode-based services: base, nav, and docking. The Nav2 and docking stacks start and stop as separate services. The battery_manager automates transitions based on battery state, and custom boot scripts can bring up the right service at startup based on the stack and requirements.

- What happens when charging breaks mid-cycle because of power interruptions or physical disturbances?

The charging loop is supervised by a state machine that detects power loss events. When a disruption occurs, the system automatically triggers a full undock, backup, recovery delay, realign, and redock sequence without operator involvement. This recovery loop reduces failed-charge cycles, improves fleet uptime in noisy industrial environments, and cuts down on manual rescue operations.

- How does the docking architecture scale across multiple robots in the same environment?

The docking system uses the same namespace-aware ROS 2 architecture as the multi-robot mapping stack. Each robot’s docking nodes, topics, and services are scoped under its own namespace, which enables independent docking cycles across shared infrastructure. This architecture supports fleet scaling with minimal configuration and keeps the system modular and easier to extend and maintain.

Arun is a seasoned Embedded Vision leader with 11+ years of experience driving innovation in robotics and AI-powered imaging systems. He focuses on developing perception stacks that power intelligent robotic systems, bridging hardware, algorithms, and edge deployment.