Key Takeaways

- How 3D CW iToF depth cameras are used across agricultural robots

- Imaging for ground profiling and spacing measurement during field navigation

- How depth data improves fruit height estimation, produce separation, and picking approach

- Benefits of e-con Systems’ DepthVista Helix in smart agriculture systems

Smart agriculture places robots in open fields where visual conditions change hour by hour. Crop rows bend, soil height varies, and plants overlap as they grow. Color-based vision struggles once shadows, dust, and dense foliage enter the scene. Depth perception becomes the factor that determines whether a machine keeps moving or loses alignment.

Row detection and crop harvesting form the core of agricultural autonomy. A robot first needs depth data to identify row position, spacing, and ground variation. The same depth information then guides harvesting, where fruit location, height, and reach distance drive every picking action. Small depth deviations at either stage can cascade into missed rows or damaged produce.

This is where 3D Continuous-Wave iToF depth sensing from e-con Systems enters smart agriculture workflows. In this blog, you’ll learn how 3D CW iToF depth enables row detection in open fields and improves the performance of crop harvesting tasks.

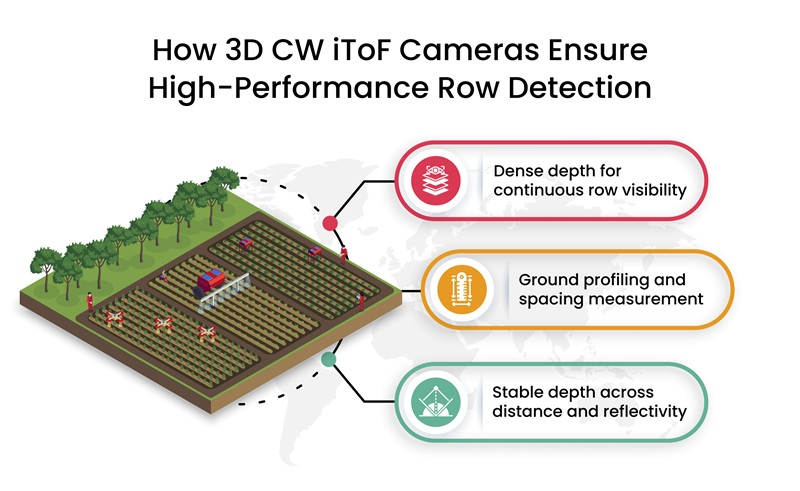

How 3D CW iToF Cameras Ensure High-Performance Row Detection

Row detection in smart agriculture depends on consistent depth data across soil, stems, and foliage that change shape and position through the day. 3D Continuous-Wave iToF measures depth directly through phase calculation of modulated infrared light, producing Z-axis depth that stays stable when color and texture vary.

A 3D CW iToF camera’s depth-first approach gives agricultural robots a dependable way to read field structure before any harvesting action begins.
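The phase calculation mentioned above can be illustrated with a minimal Python sketch. It assumes the common four-sample scheme, where the sensor correlates the returned light at 0°, 90°, 180°, and 270° offsets, and it assumes the sample model `offset + amplitude * cos(phase + theta)`. The function names and the 20 MHz modulation frequency are hypothetical and chosen only for illustration; they are not taken from any specific camera.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def unambiguous_range(mod_freq_hz):
    """Largest distance measurable before the phase wraps past 2*pi."""
    return C / (2.0 * mod_freq_hz)


def phase_from_samples(c0, c90, c180, c270):
    """Recover the phase shift from four correlation samples.

    Assumed sample model: c(theta) = offset + amplitude * cos(phase + theta).
    Under that model, c270 - c90 = 2*A*sin(phase) and c0 - c180 = 2*A*cos(phase).
    """
    return math.atan2(c270 - c90, c0 - c180) % (2.0 * math.pi)


def phase_to_depth(phase_rad, mod_freq_hz):
    """Convert phase to distance; 4*pi accounts for the round trip."""
    return C * phase_rad / (4.0 * math.pi * mod_freq_hz)


def simulate_samples(depth_m, mod_freq_hz, amplitude=1.0, offset=2.0):
    """Generate synthetic samples for a target at depth_m (illustration only)."""
    phase = 4.0 * math.pi * mod_freq_hz * depth_m / C
    return tuple(
        offset + amplitude * math.cos(phase + math.radians(theta))
        for theta in (0, 90, 180, 270)
    )


MOD_FREQ_HZ = 20e6  # hypothetical modulation frequency
samples = simulate_samples(2.0, MOD_FREQ_HZ)
depth = phase_to_depth(phase_from_samples(*samples), MOD_FREQ_HZ)
print(f"recovered depth: {depth:.3f} m")
print(f"unambiguous range: {unambiguous_range(MOD_FREQ_HZ):.2f} m")
```

Because the offset and amplitude cancel out of the phase estimate in this model, the recovered distance does not depend on how bright or dark the surface appears, which is consistent with depth staying stable when color and texture vary.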

Dense depth for continuous row visibility

High pixel density in a 3D CW iToF sensor captures crop rows as continuous depth structures rather than broken segments. Narrow gaps between plants, thin stems, and uneven spacing remain visible in the depth map. This reduces row loss during navigation when crops grow irregularly or overlap.

Ground profiling and spacing measurement

3D CW iToF depth maps represent ground height, slope, and row spacing in a single frame. Variations in soil elevation register as gradual depth changes instead of visual noise. Agricultural robots use this information to maintain alignment between rows while accounting for ridges, furrows, and wheel tracks.
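As a rough illustration of ground profiling and spacing measurement, the sketch below works on a single cross-row profile. It assumes the depth frame has already been converted into lateral positions and heights in the robot frame, which is a step not shown here. The two-pass line fit, the function names, and the 0.1 m plant threshold are illustrative assumptions rather than a documented pipeline.

```python
def fit_line(xs, zs):
    """Closed-form least-squares fit of z = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_z = sum(zs) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxz = sum((x - mean_x) * (z - mean_z) for x, z in zip(xs, zs))
    slope = sxz / sxx
    return slope, mean_z - slope * mean_x


def fit_ground(xs, zs):
    """Two-pass fit: plants pull the first fit upward, so refit on points below it."""
    slope, intercept = fit_line(xs, zs)
    ground = [(x, z) for x, z in zip(xs, zs) if z < slope * x + intercept]
    gx, gz = zip(*ground)
    return fit_line(gx, gz)


def row_centers(xs, zs, slope, intercept, min_height=0.1):
    """Group contiguous samples that stand above the ground line into rows."""
    centers, run = [], []
    for x, z in zip(xs, zs):
        if z - (slope * x + intercept) > min_height:
            run.append(x)
        elif run:
            centers.append(sum(run) / len(run))
            run = []
    if run:
        centers.append(sum(run) / len(run))
    return centers


# Synthetic profile: 3 m wide, sloped soil, four crop rows 0.3 m tall.
xs = [i * 0.05 for i in range(61)]
plant_indices = {c + d for c in (10, 25, 40, 55) for d in (-1, 0, 1)}
zs = [0.02 * x + 0.1 + (0.3 if i in plant_indices else 0.0) for i, x in enumerate(xs)]

slope, intercept = fit_ground(xs, zs)
centers = row_centers(xs, zs, slope, intercept)
spacing = sum(b - a for a, b in zip(centers, centers[1:])) / (len(centers) - 1)
print(f"ground slope: {slope:.3f}, row centers: {centers}, mean spacing: {spacing:.2f} m")
```

A real field profile would include ridges, furrows, and wheel tracks, so a robust fit over a full plane would be a more realistic choice than this single-line fit.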

Stable depth across distance and reflectivity

Dual-frequency CW iToF operation improves signal quality across longer distances in open fields. Depth readings remain consistent across dry soil, moist ground, and leaf surfaces that reflect light differently. Such stability keeps row detection reliable as robots move across large plots during planting, monitoring, or harvesting passes.
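One widely used reason for running CW iToF at two modulation frequencies is to resolve phase wrapping, since each frequency alone can only report distance up to its own unambiguous range. The sketch below shows that general idea by searching for the distance on which both wrapped readings agree. The 100 MHz and 80 MHz values and the function names are hypothetical, and this is not a description of how any particular camera combines its frequencies.

```python
C = 299_792_458.0  # speed of light in m/s


def wrap_range(mod_freq_hz):
    """Distance at which a single-frequency reading wraps back to zero."""
    return C / (2.0 * mod_freq_hz)


def wrapped_depth(true_depth_m, mod_freq_hz):
    """What a single-frequency measurement would report (illustration only)."""
    return true_depth_m % wrap_range(mod_freq_hz)


def unwrap_dual(d1, f1, d2, f2, max_range_m):
    """Pick the candidate distance on which both frequencies agree best."""
    r1, r2 = wrap_range(f1), wrap_range(f2)
    best_err, best_depth = None, None
    n1 = 0
    while d1 + n1 * r1 <= max_range_m:
        cand1 = d1 + n1 * r1
        # Nearest wrap count for the second frequency, never negative
        n2 = max(0, round((cand1 - d2) / r2))
        cand2 = d2 + n2 * r2
        err = abs(cand1 - cand2)
        if best_err is None or err < best_err:
            best_err, best_depth = err, (cand1 + cand2) / 2.0
        n1 += 1
    return best_depth


F1_HZ, F2_HZ = 100e6, 80e6  # hypothetical frequency pair
true_depth = 5.0
d1 = wrapped_depth(true_depth, F1_HZ)
d2 = wrapped_depth(true_depth, F2_HZ)
recovered = unwrap_dual(d1, F1_HZ, d2, F2_HZ, max_range_m=7.0)
print(f"wrapped readings: {d1:.3f} m and {d2:.3f} m, recovered: {recovered:.3f} m")
```

In this example each frequency wraps well before 5 m, yet the pair together identifies the correct distance. With real, noisy measurements the agreement is never exact, so the error threshold and the choice of frequencies become design decisions.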

Original Scene | IR Output | Depth Output

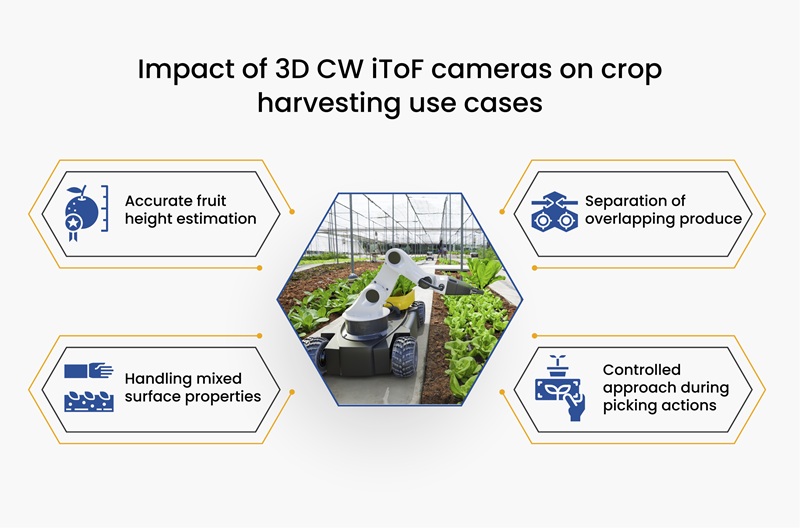

How 3D iToF Depth Perception Plays a Key Role in Crop Harvesting

Crop harvesting changes the depth challenge from wide-area perception to close-range accuracy. Once a robot reaches the crop row, it must judge fruit position, height, and separation with minimal tolerance for error. 3D Continuous-Wave iToF depth delivers direct depth measurement at the point of interaction, which keeps harvesting actions aligned with real crop geometry rather than visual estimates.

Impact of 3D CW iToF cameras on crop harvesting use cases

- Accurate fruit height estimation: High-frequency modulation improves close-range depth precision. Small height differences between fruit, stems, and branches register clearly in the depth map. This keeps the picking tools aligned during approach and grasp.

- Handling mixed surface properties: Crops present varied reflectivity, from matte leaves to glossy fruit skins. Programmable configuration contexts switch sensor settings for different surface responses. This keeps depth data usable across mixed materials during harvesting.

- Separation of overlapping produce: Closely clustered fruits often overlap in color images. High-resolution 3D iToF depth reveals separation through small depth discontinuities. Hence, the robot can identify individual targets instead of treating clusters as a single object.

- Controlled approach during picking actions: Depth updates occur directly at the camera level, keeping motion decisions tied to live distance data. As the gripper moves closer, depth values adjust in real time. This reduces overreach and missed picks during harvesting cycles.
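The separation of overlapping produce described in the list above can be sketched on a single scanline of a depth map. The example splits the scanline wherever neighboring pixels differ by more than a small depth jump, then keeps only segments within reach of the gripper. The function names, the 0.03 m jump threshold, the 0.6 m reach limit, and the sample depths are all illustrative assumptions.

```python
def split_by_depth_jumps(depths_m, jump_m=0.03):
    """Return (start, end) index pairs for runs of smoothly varying depth."""
    segments, start = [], 0
    for i in range(1, len(depths_m)):
        # A jump larger than jump_m marks the boundary between two surfaces
        if abs(depths_m[i] - depths_m[i - 1]) > jump_m:
            segments.append((start, i))
            start = i
    segments.append((start, len(depths_m)))
    return segments


def pick_targets(depths_m, segments, max_reach_m=0.6):
    """Keep segments whose mean depth is within reach; report their mean depth."""
    targets = []
    for start, end in segments:
        mean_depth = sum(depths_m[start:end]) / (end - start)
        if mean_depth <= max_reach_m:
            targets.append((start, end, mean_depth))
    return targets


# Synthetic scanline: background foliage at 0.80 m, two touching fruits
# at roughly 0.40 m and 0.45 m that would blend together in a color image.
scanline = [0.80, 0.80, 0.41, 0.40, 0.41, 0.46, 0.45, 0.46, 0.80, 0.80]

segments = split_by_depth_jumps(scanline)
targets = pick_targets(scanline, segments)
for start, end, mean_depth in targets:
    print(f"target at pixels {start}-{end - 1}, mean depth {mean_depth:.3f} m")
```

A full implementation would work on the 2D depth map and tolerate sensor noise, but the same principle applies: a small depth discontinuity is enough to treat a cluster as individual targets.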

e-con Systems’ DepthVista Helix Offers New-Gen Agricultural Vision

e-con Systems has been designing, developing, and manufacturing OEM cameras since 2003. DepthVista Helix is our brand-new 3D CW iToF camera module, powered by onsemi’s AF0130 CMOS ToF sensor. It is well suited to smart agriculture systems, delivering high-resolution depth data at 1.2MP @ 60fps and VGA @ 30fps. DepthVista Helix also comes with on-camera depth computation and a high-accuracy depth range of 0.2m to 6m (with +1% accuracy).

DepthVista Helix can be customized with multiple VCSEL illumination configurations, including a 4-VCSEL option for outdoor deployments, extending usable depth sensing up to 6 meters in open-field environments. The camera can also be offered with an optional RGB sensor alongside depth output. This provides the ability to simultaneously capture visual information and 3D depth data within the same sensing pipeline.

Explore our smart agricultural vision

See all e-con Systems’ ToF cameras

Use our Camera Selector to view our complete portfolio.

Looking for help in choosing the right 3D iToF camera for your smart agriculture system? Please write to camerasolutions@e-consystems.com. Our team of vision experts will be happy to help you.

FAQs

Why does smart agriculture vision rely on 3D CW iToF depth instead of regular cameras?

In open agricultural fields, visual conditions shift constantly due to sunlight, dust, overlapping plants, and uneven ground. These changes reduce the reliability of color and texture cues that regular cameras depend on. 3D CW iToF approaches the problem differently by measuring physical depth through infrared phase calculation, which keeps spatial understanding intact even when visual appearance varies across the field.

How does 3D CW iToF depth improve crop row detection in real field conditions?

Crop row detection depends on recognizing spacing, alignment, and ground variation rather than visual contrast. With 3D CW iToF, rows appear as continuous depth structures formed by consistent distance measurements across soil, stems, and foliage.

Why does depth stability matter when agricultural robots move across large plots?

As robots move through a field, both distance and surface reflectivity change due to soil moisture, leaf texture, and plant density. These variations can introduce noise into depth measurements if the signal weakens over distance. Dual-frequency CW iToF improves signal quality across longer ranges, which keeps depth readings consistent as the robot transitions from one part of the field to another.

How does 3D iToF depth guide crop harvesting once the robot reaches the plant?

After navigation brings the robot into position, the task changes from wide-area perception to close-range measurement. At this stage, 3D iToF depth provides direct information about fruit height, separation, and approach distance. Since these measurements reflect actual geometry rather than visual estimation, harvesting actions remain aligned while the robot moves closer to the crop.

Why do illumination configuration and RGB pairing matter in smart agriculture workflows?

Field robots often require depth data both during approach and during close interaction, which places different demands on illumination. Multiple VCSEL configurations extend usable depth range for outdoor operation, while pairing depth with RGB adds visual context for crop identification.

Ram Prasad is a Camera Solution Architect with over 12 years of experience in embedded product development, technical architecture, and delivering vision-based solutions. He has been instrumental in enabling 100+ customers across diverse industries to integrate the right imaging technologies into their products. His expertise spans a wide range of applications, including smart surveillance, precision agriculture, industrial automation, and mobility solutions. Ram’s deep understanding of embedded vision systems has helped companies accelerate innovation and build reliable, future-ready products.