Time-of-Flight (ToF) cameras generate depth maps by measuring the phase shift between emitted near-infrared light and its reflection. Each pixel records the shift, which is then converted into distance information. However, raw depth maps tend to contain fluctuations caused by sensor noise, surface reflectivity, and ambient light.

Such irregularities manifest as jitter, blurred edges, or scattered point cloud artifacts, which reduce the quality of depth information.

While tuning system-level parameters such as modulation frequency, illumination strength, and exposure duration improves the raw signal, it can’t fully remove residual noise. This is where spatial filters become crucial.

In this blog, you’ll learn how spatial filters work, the algorithms used in ToF cameras, and their impact on enhancing depth quality.

How Spatial Filtering Works

Spatial filtering reduces pixel-level variance across local neighborhoods. A single pixel rarely contains enough information to provide a stable measurement, especially when surfaces exhibit low reflectivity or when ambient interference is present. Considering the values of surrounding pixels helps reduce outliers and creates smoother transitions across a depth map.

The principle involves applying a mathematical kernel across a defined area around each pixel. The kernel dictates how neighboring pixels influence the value assigned to the center pixel.

Depending on the algorithm, the kernel may weight closer pixels more heavily, factor in differences in amplitude or phase, or select the median value outright. The outcome is a depth map that suppresses noise without discarding structural details entirely.
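To make the role of the kernel concrete, here is a minimal sketch that computes a single output pixel from one 3×3 neighborhood in three ways: a uniform kernel, a center-weighted kernel, and a median. The depth values, both weight kernels, and the function name `weighted_center` are illustrative assumptions, not values from any particular camera.

```python
import statistics


def weighted_center(neighborhood, kernel):
    """Return the kernel-weighted average of one pixel neighborhood."""
    total = 0.0
    weight_sum = 0.0
    for row_values, row_weights in zip(neighborhood, kernel):
        for value, weight in zip(row_values, row_weights):
            total += weight * value
            weight_sum += weight
    # Normalize so the weights effectively sum to 1.
    return total / weight_sum


# Hypothetical 3x3 depth neighborhood (millimetres) with a noisy center pixel.
neighborhood = [
    [1000.0, 1000.0, 1000.0],
    [1000.0, 1090.0, 1000.0],
    [1000.0, 1000.0, 1000.0],
]

uniform = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]          # every neighbor counts equally
center_weighted = [[1, 2, 1], [2, 4, 2], [1, 2, 1]]  # closer pixels count more

box_value = weighted_center(neighborhood, uniform)
center_value = weighted_center(neighborhood, center_weighted)
median_value = statistics.median(v for row in neighborhood for v in row)

print(box_value, center_value, median_value)
```

The same neighborhood yields different center values depending only on the kernel, which is the trade-off the filters in the next section formalize.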

Types of Spatial Filters

1) Gaussian filter

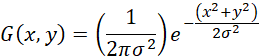

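A Gaussian filter is one of the most widely implemented techniques for smoothing depth data. The weighting function is radially symmetric and, in its standard two-dimensional form, is defined as:

$$G(x, y) = \frac{1}{2\pi\sigma^2} \exp\left(-\frac{x^2 + y^2}{2\sigma^2}\right)$$

where $(x, y)$ is the offset from the kernel center and $\sigma$ is the standard deviation that controls how quickly the weights fall off.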

The function gives stronger influence to pixels closer to the center of the kernel, tapering smoothly as distance increases. In practice, 3×3 or 5×5 kernels are commonly used.

The filter may be applied to the phase map prior to depth conversion or executed directly on the depth map.
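For context on what depth conversion involves, the sketch below assumes a continuous-wave iToF model with a single modulation frequency, where distance is d = c·φ / (4π·f_mod). The function names and the 100 MHz example frequency are assumptions for illustration. Real pipelines also handle phase unwrapping and calibration offsets, which are omitted here.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def phase_to_depth(phase_rad, mod_freq_hz):
    """Convert a phase shift (radians) to distance (metres), CW iToF model."""
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * mod_freq_hz)


def unambiguous_range(mod_freq_hz):
    """Largest distance measurable before the phase wraps past 2*pi."""
    return SPEED_OF_LIGHT / (2.0 * mod_freq_hz)


def phase_map_to_depth_map(phase_map, mod_freq_hz):
    """Apply the conversion to every pixel of a 2D phase map (list of rows)."""
    return [[phase_to_depth(p, mod_freq_hz) for p in row] for row in phase_map]


# Assumed example: 100 MHz modulation, small hypothetical phase map.
MOD_FREQ_HZ = 100e6
phase_map = [[math.pi / 2, math.pi], [math.pi, 3 * math.pi / 2]]
depth_map = phase_map_to_depth_map(phase_map, MOD_FREQ_HZ)
max_range = unambiguous_range(MOD_FREQ_HZ)
```

Under this simplified model the conversion is a plain scaling of the phase, so a linear filter such as Gaussian smoothing behaves equivalently on either representation.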

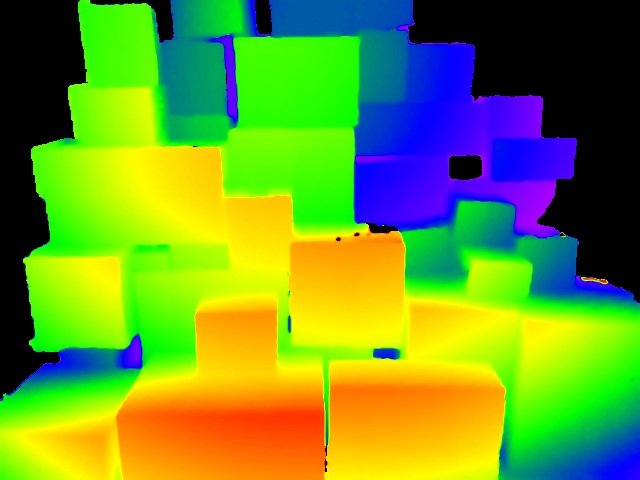

The computational process involves multiplying each pixel and its neighbors by the pre-computed weights, summing the results, and then normalizing by the total weight. This produces a blurred version of the original map that reduces random fluctuations across large surfaces.

While contrast at edges decreases, the global smoothness of the point cloud improves, which is helpful for object dimensioning or volume estimation.
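The multiply, sum, and normalize steps described above can be sketched in plain Python as follows. This is a dependency-free illustration rather than a production implementation: the function names, the default `sigma`, and the border handling (using only in-bounds neighbors) are assumptions, and an optimized library routine would normally be used instead.

```python
import math


def gaussian_kernel(radius, sigma):
    """Build a (2*radius+1) x (2*radius+1) grid of unnormalized Gaussian weights."""
    return [
        [math.exp(-(dx * dx + dy * dy) / (2.0 * sigma * sigma))
         for dx in range(-radius, radius + 1)]
        for dy in range(-radius, radius + 1)
    ]


def gaussian_filter(depth, radius=1, sigma=1.0):
    """Smooth a 2D depth map given as a list of rows."""
    rows, cols = len(depth), len(depth[0])
    kernel = gaussian_kernel(radius, sigma)
    out = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            total = 0.0
            weight_sum = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    # Skip neighbors that fall outside the image.
                    if 0 <= ny < rows and 0 <= nx < cols:
                        w = kernel[dy + radius][dx + radius]
                        total += w * depth[ny][nx]
                        weight_sum += w
            # Normalize by the total weight actually used.
            out[y][x] = total / weight_sum
    return out


# Hypothetical flat surface at 1000 mm with one noisy pixel.
flat = [[1000.0] * 5 for _ in range(5)]
flat[2][2] = 1100.0
smoothed = gaussian_filter(flat, radius=1, sigma=1.0)
```

With `radius=1` this corresponds to a 3×3 kernel, and `radius=2` gives 5×5. The noisy pixel is pulled toward its neighbors, while the neighbors pick up a small share of the disturbance, which is the blurring effect noted above.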

The 2D and 3D images of the Gaussian filter are shown below.

2) Bilateral filter

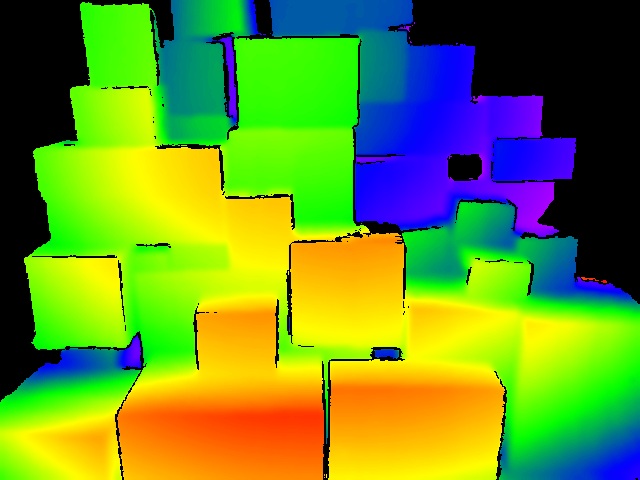

The bilateral filter extends the concept of Gaussian filtering by incorporating amplitude differences into the weighting function. Two pixels may be spatially close but belong to distinct surfaces with differing depth values.

- A simple Gaussian kernel would blend them together, softening the edge

- A bilateral filter assigns a lower weight to neighbors with large amplitude or phase differences

In ToF systems, amplitude maps serve as a strong reference for intensity values. The filter uses these amplitude differences in combination with spatial proximity to refine depth maps. Neighbors with similar amplitude and phase values contribute strongly, while those with sharp discontinuities have less influence. This ensures that structural edges, such as the boundary between an object and its background, remain sharp.
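The weighting described above can be sketched as an amplitude-guided bilateral filter. This is a minimal illustration under stated assumptions: it uses only the amplitude map as the similarity term (not phase), and the function name, `sigma_space`, `sigma_amp`, and the sample data are all hypothetical. How a given camera combines amplitude and phase is not specified here.

```python
import math


def bilateral_filter(depth, amplitude, radius=1, sigma_space=1.0, sigma_amp=10.0):
    """Smooth `depth` while down-weighting neighbors whose amplitude differs."""
    rows, cols = len(depth), len(depth[0])
    out = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            total = 0.0
            weight_sum = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if not (0 <= ny < rows and 0 <= nx < cols):
                        continue
                    # Spatial term: same shape as a Gaussian kernel.
                    w_space = math.exp(-(dx * dx + dy * dy)
                                       / (2.0 * sigma_space ** 2))
                    # Similarity term: penalize large amplitude differences.
                    diff = amplitude[ny][nx] - amplitude[y][x]
                    w_amp = math.exp(-(diff * diff) / (2.0 * sigma_amp ** 2))
                    w = w_space * w_amp
                    total += w * depth[ny][nx]
                    weight_sum += w
            # The center pixel always has weight 1, so weight_sum is never 0.
            out[y][x] = total / weight_sum
    return out


# Hypothetical scene: near object (left) and far background (right).
depth = [[1000.0, 1000.0, 1000.0, 2000.0, 2000.0, 2000.0] for _ in range(3)]
amplitude = [[200.0, 200.0, 200.0, 50.0, 50.0, 50.0] for _ in range(3)]
depth[1][1] = 1012.0  # small noise on the near object
filtered = bilateral_filter(depth, amplitude)
```

In this example the noise on the near object is averaged down, while the pixels on either side of the 1000 mm to 2000 mm step stay essentially unchanged because their cross-edge neighbors receive near-zero weight.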

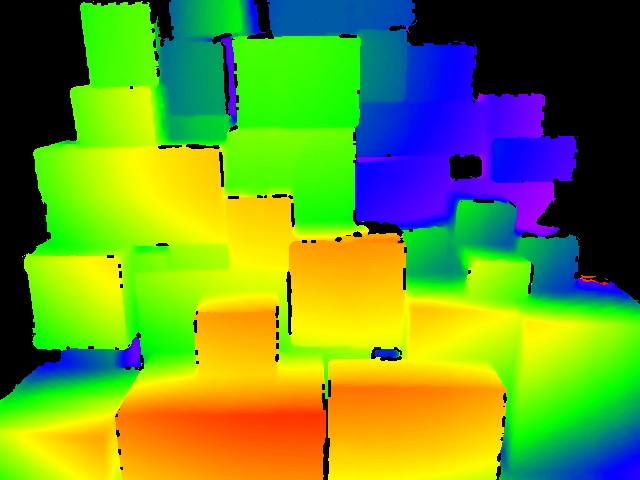

The 2D and 3D images of the bilateral filter are shown below.

The real-world implementation of the filter can be seen in our latest DepthVista-Helix 3D camera, which delivers accurate depth measurements while preserving sharp structural edges.

3) Spatial median filter

The spatial median filter takes a different approach. Instead of weighting or interpolating pixel values, it selects the median from a neighborhood. As a result, the chosen value always corresponds to an actual measurement rather than an interpolated figure.

The kernel size, typically 3×3 or 5×5, defines how many neighbors are considered. The median operation suppresses extreme outliers effectively. For instance, a single pixel affected by reflection artifacts or sensor noise will have little influence if the surrounding neighborhood is stable. This reduces salt-and-pepper type noise without blurring edges as much as Gaussian filtering might.

Spatial median filters are less computationally intensive than bilateral filters, though heavier than Gaussian smoothing.
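A minimal sketch of the median selection is shown below. The function name and sample data are assumptions for illustration. It uses `statistics.median_low` so that the output is always one of the measured values, even at image borders where the window holds an even number of pixels.

```python
import statistics


def median_filter(depth, radius=1):
    """Replace each pixel with the median of its (2*radius+1)^2 neighborhood."""
    rows, cols = len(depth), len(depth[0])
    out = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            # Collect in-bounds neighbors; the window shrinks at the borders.
            window = [
                depth[ny][nx]
                for ny in range(max(0, y - radius), min(rows, y + radius + 1))
                for nx in range(max(0, x - radius), min(cols, x + radius + 1))
            ]
            # median_low returns an actual sample rather than an average.
            out[y][x] = statistics.median_low(window)
    return out


# Hypothetical flat surface at 1000 mm with one extreme outlier.
noisy = [[1000.0] * 5 for _ in range(5)]
noisy[2][2] = 5000.0
cleaned = median_filter(noisy, radius=1)

# Hypothetical depth step: left half at 1000 mm, right half at 2000 mm.
step = [[1000.0, 1000.0, 2000.0, 2000.0] for _ in range(4)]
step_filtered = median_filter(step, radius=1)
```

The isolated spike is removed entirely instead of being spread across its neighbors, and the depth step in the second example passes through unchanged.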

The 2D and 3D images of the spatial median filter are shown below.

Benefits of Using Spatial Filters in ToF Cameras

Reduction of frame-to-frame jitter

Spatial filters suppress random fluctuations across neighboring pixels, reducing the temporal instability that appears as jitter in point clouds. Depth data becomes smoother between consecutive frames, which improves visualization and lowers the risk of measurement drift in static environments.

Improved surface continuity

Large planar objects and smoothly curved structures often appear fragmented in raw depth maps due to local pixel variance. Applying Gaussian smoothing reduces these inconsistencies and yields surfaces that are uniform and geometrically coherent. The outcome is improved accuracy in dimensioning tasks, industrial inspection, and volumetric analysis, where continuity across a surface is essential for correct interpretation.

Preservation of boundaries

Many ToF camera-based applications, such as object detection or segmentation in cluttered scenes, require edge clarity. Bilateral filtering incorporates spatial proximity and intensity differences, so pixels from distinct surfaces are less likely to be blended. This preserves sharp transitions at boundaries, producing depth maps with well-defined edges that retain structural integrity even under noisy conditions.

Mitigation against outliers

Multipath reflections, low-reflectivity materials, or transient sensor artifacts generate isolated outlier pixels that corrupt depth maps. Median filtering neutralizes these outliers by choosing the middle value of a neighborhood rather than computing weighted averages.

This approach removes spurious spikes without blurring nearby data, leading to stronger resilience in environments with reflective or variable surfaces.

Flexibility of usage

Different operational contexts demand different trade-offs between smoothness, edge retention, and computational load. Gaussian filters offer simplicity and speed, bilateral filters enhance edge preservation, and median filters target outlier suppression.

Developers can adjust kernel sizes, weighting factors, and filter combinations to match their system resources and application demands. This lets ToF cameras deliver depth data tuned for downstream use cases.
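As a rough illustration of combining filters, the sketch below chains stages described by a `(kind, radius)` pair. Everything here is a hypothetical design: the stage names, the `run_pipeline` helper, and the use of a simple box mean as a stand-in for Gaussian smoothing to keep the example short.

```python
import statistics


def window(depth, y, x, radius):
    """Return the in-bounds neighborhood values around pixel (y, x)."""
    rows, cols = len(depth), len(depth[0])
    return [
        depth[ny][nx]
        for ny in range(max(0, y - radius), min(rows, y + radius + 1))
        for nx in range(max(0, x - radius), min(cols, x + radius + 1))
    ]


# Hypothetical stage names mapped to the reduction applied to each window.
REDUCERS = {
    "mean": statistics.mean,          # box smoothing (stand-in for Gaussian)
    "median": statistics.median_low,  # outlier suppression
}


def apply_stage(depth, kind, radius):
    """Run one filter stage and return a new depth map."""
    reduce_fn = REDUCERS[kind]
    return [
        [reduce_fn(window(depth, y, x, radius)) for x in range(len(depth[0]))]
        for y in range(len(depth))
    ]


def run_pipeline(depth, stages):
    """Apply each (kind, radius) stage in order."""
    for kind, radius in stages:
        depth = apply_stage(depth, kind, radius)
    return depth


# Hypothetical flat surface at 1000 mm with one extreme outlier.
noisy = [[1000.0] * 5 for _ in range(5)]
noisy[2][2] = 5000.0

mean_only = run_pipeline(noisy, [("mean", 1)])
median_then_mean = run_pipeline(noisy, [("median", 1), ("mean", 1)])
```

Smoothing alone spreads the outlier into its neighbors, while running the median stage first removes it before smoothing. Changing the stage list or the radii is the kind of tuning the paragraph above refers to.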

e-con Systems Offers Time-of-Flight Cameras with Spatial Filters

Since 2003, e-con Systems has been designing, developing, and manufacturing customizable OEM camera solutions. Our ToF cameras operate with NIR wavelengths at 940 nm and 850 nm to deliver accurate 3D imaging in indoor and outdoor scenarios. They support USB, MIPI, and GMSL2 interfaces and offer SDK compatibility for NVIDIA Jetson AGX Orin, AGX Xavier, and x86 platforms.

Our camera portfolio features an iToF GMSL2 Camera and a 3D Depth (CW iToF) USB Camera based on onsemi’s AF0130 CMOS ToF sensor, which uses the bilateral filter to acquire high-resolution depth images without compromising sharp edges.

Use our Camera Selector to check out e-con Systems’ full portfolio.

If you require expert assistance in deploying the best-fit ToF camera into your vision system, get in touch with us at camerasolutions@e-consystems.com.

Frequently Asked Questions

- What role do spatial filters play in ToF cameras?

Spatial filters smooth pixel-level inconsistencies by considering local neighborhoods instead of isolated values. They reduce jitter and noise in depth maps, which leads to cleaner point clouds for use in robotics, inspection, and mapping.

- How does a Gaussian filter improve ToF depth data?

A Gaussian filter applies a weighted average across neighboring pixels using a radially symmetric function. Neighbors closer to the center pixel contribute strongly, reducing random fluctuations and producing smoother surfaces across large regions.

- Why is a bilateral filter valuable for edge preservation?

A bilateral filter integrates spatial proximity with amplitude and phase differences when calculating pixel weights. The method reduces blending across depth discontinuities, which preserves sharp edges and maintains clear boundaries in depth maps.

- When should a spatial median filter be used?

A spatial median filter is ideal for environments where multipath reflections or sensor artifacts introduce outliers. It selects the median value from a neighborhood, eliminating extreme deviations while keeping depth data consistent.

- How is the choice between spatial filters made?

The decision depends on system priorities and processing resources. Gaussian filters are fast and straightforward for general smoothing, bilateral filters are best where edge clarity is critical, and median filters work well when outlier suppression is the main goal.

Prabu is the Chief Technology Officer and Head of Camera Products at e-con Systems, with more than 15 years of experience in the embedded vision space. He brings deep knowledge of USB cameras, embedded vision cameras, vision algorithms, and FPGAs. He has built 50+ camera solutions spanning domains such as medical, industrial, agriculture, retail, biometrics, and more. He also has expertise in device driver development and BSP development. Currently, Prabu’s focus is to build smart camera solutions that power new-age AI-based applications.